{

"pages": [

{

"title": "Non-Lazy RURQ Data Structure",

"summary": "Learn about a completely bottom-up and iterative approach to the Segment Tree!",

"tags": [

"hackpack",

"programming",

"data-structure"

],

"date": 1759672602000,

"cover": "https://visualgo.net/img/png/segmenttree.png",

"hidden": false,

"slug": "non-lazy-rurq",

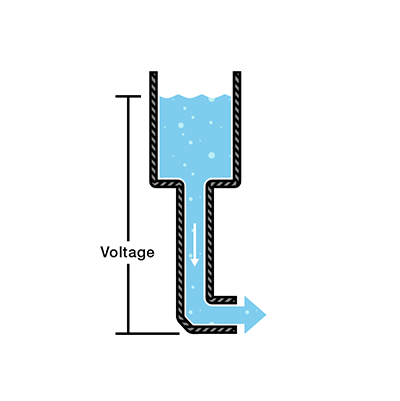

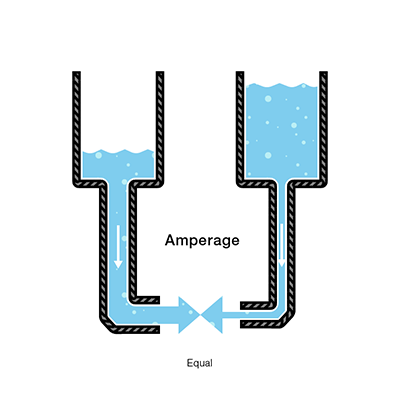

"content": "In this article we want to build a fast, array-based **data structure** that supports:\n\n- **Range add**: add a value `v` to every element in a contiguous interval `[l, r]`.\n- **Range sum**: query the sum of all the elements inside a contiguous interval `[l, r]`.\n\nThe implementation is **iterative / bottom-up**, storing nodes in linear arrays, and uses **immediate per-node additions** that represent a *per-element* increment for that node\u2019s whole segment. The code is designed for clarity and speed in competitive programming use.\n\nThe advantages of this implementation are that it is relatively quick to implement and also provides the speed of writing an iterative array-based segment tree with minimal memory manipulation. This data structure has been tested across several problems and has been proven to pass most time constraints where the traditional recursive node-based segment tree even with lazy propagation fails.\n\nThe complete implementation of this data structure can be found here: [github.com/BooleanCube/hackpack](https://github.com/BooleanCube/hackpack/blob/main/content/data-structures/FastLazy.h)\n\n---\n\n# 1. Storage\n\n\n\nThe structure stores data in contiguous arrays (vectors):\n\n- `n` \u2014 number of elements in the array (constructor parameter).\n- `tree` \u2014 a vector of length `2*n` that holds the **segment sums** (one entry per node). Node indices used are `1 .. 2*n-1`. Index `0` is unused.\n- `lazy` \u2014 a vector of length `2*n` that stores **per-element pending additions** for the node\u2019s whole segment. (`lazy[idx]` means \u201cadd this value to every element in `rng[idx]` when/if children are visited\u201d.)\n- `rng` \u2014 a `vector<pair<int,int>>` of length `2*n` mapping `idx -> [L, R]` (inclusive, array indices) for the node `idx`. 
This lets us compute segment length quickly: `len = R - L + 1`.\n- `vt` alias \u2014 `using vt = vector<T>;` for convenience.\n\n**Indexing rule (bottom-up layout):**\n\n- Leaves are at indices `n .. 2*n-1`, and leaf `idx = n + i` maps to array element `i` (0-indexed).\n- Internal nodes are `1 .. n-1`.\n- Root is index `1`.\n\nThis design avoids recursion during updates/queries and is friendly to iterative algorithms.\n\n---\n\n# 2. Construction\n\n\n\nThe constructor must:\n\n1. allocate `tree`, `lazy`, and `rng` vectors,\n2. fill `rng` for all node indices so we can compute node lengths and map nodes back to array indices.\n\nA safe and simple `rng` construction is recursive, starting at the root index `1`. For node `idx`:\n\n- If `idx >= n` \u2192 it's a leaf; `rng[idx] = {idx - n, idx - n}`.\n- Else \u2192 recursively build children and set `rng[idx] = { rng[left].first, rng[right].second }`.\n\n**Corrected constructor & `_construct` snippet**\n\n```cpp\ntemplate <class T>\nstruct segtree {\n using vt = vector<T>;\n const int n;\n constexpr static T def = 0;\n vt tree, lazy;\n vector<pair<int,int>> rng;\n\n segtree(int N) : n(N) {\n tree = vt(n<<1, def);\n lazy = vt(n<<1, def);\n rng = vector<pair<int,int>>(n<<1);\n _construct(1); // fill rng[1..2*n-1]\n }\n\n pair<int,int> _construct(int idx) {\n if (idx >= n) return rng[idx] = { idx - n, idx - n };\n auto L = _construct(idx << 1);\n auto R = _construct((idx << 1) + 1);\n return rng[idx] = { L.first, R.second };\n }\n\n // ... rest of implementation follows ...\n};\n```\n\nNotes:\n\n- The implementation works for any `n >= 1`. When `n` is not a power of two, `rng` is exact for every node the iterative loops select. A few internal nodes (the root when `n = 6`, for example) join leaves from two different depths, so their stored range is not a contiguous interval, but the loops never select those nodes.\n- `tree` and `lazy` are initialized with `def` (0 for sums).\n\n---\n\n# 3. Range update (how it works)\n\n\n\n**Public API:** `update(l, r, val)` with `0 <= l <= r < n`. 
Internally we transform to node indices `l += n; r += n;` and run `_incUpdate(l, r, val)`.\n\n**High-level idea (bottom-up / iterative covering):**\n\n- We pick a minimal set of whole nodes whose segments exactly cover the update interval `[l, r]` using the standard iterative segment-tree trick:\n\n - If `l` is a right child, we take node `l` and move on to `l + 1`.\n - If `r` is a left child, we take node `r` and move on to `r - 1`.\n - Then shift `l` and `r` to their parents (`l >>= 1; r >>= 1`) and repeat until they meet; the node where they meet is taken last.\n- For each chosen node `idx`:\n\n 1. we apply the *total* addition to `tree[idx]` and *also* add that total to all ancestors so `tree` remains consistent; and\n 2. we record the per-element lazy increment in `lazy[idx]` so that descendants (if read later) can compute ancestor contributions.\n\nThis two-step approach avoids eagerly pushing lazies down; it keeps `tree` consistent by updating ancestor totals, while `lazy` retains the information required to reconstruct ancestor contributions for partial queries.\n\n**Important fix (avoid undefined behavior):** do **not** write `lazy[l++] = op(lazy[l], val);`. That expression reads and modifies `l` without sequencing, which is undefined behavior before C++17. 
Increment/decrement must be separate statements.\n\n**Core update routines (corrected)**:\n\n```cpp\nvoid update(int l, int r, T val) { _incUpdate(l + n, r + n, val); }\n\nvoid _incUpdate(int l, int r, T val) {\n for (; l < r; l >>= 1, r >>= 1) {\n if (l & 1) {\n _updateLazy(l, val);\n lazy[l] = op(lazy[l], val); // safe: update lazy first, then advance\n ++l;\n }\n if (l == r) break;\n if (!(r & 1)) {\n _updateLazy(r, val);\n lazy[r] = op(lazy[r], val);\n --r;\n }\n }\n // final node (when l == r)\n _updateLazy(l, val);\n lazy[l] = op(lazy[l], val);\n}\n\nvoid _updateLazy(int idx, T val) {\n // convert per-element val to total for node idx,\n // then add that total into tree[idx] and all ancestors\n T total = value(idx, val); // val * node_length\n for (; idx; idx >>= 1) tree[idx] = op(tree[idx], total);\n}\n```\n\n**Why update ancestors?**\nBecause `lazy[idx]` records a per-element increment for `idx` so we don't push it to children. But queries that use ancestor nodes must see the node sums updated. By adding the `total` to `tree[idx]` and all ancestors, we keep `tree[parent]` consistent.\n\n**`value(idx, val)`** converts a per-element increment `val` into the total sum over the node:\n\n```cpp\nT value(int idx, T val) { return val * (rng[idx].second - rng[idx].first + 1); }\n```\n\n---\n\n# 4. Range query (how it works)\n\n\n\n**Public API:** `query(l, r)` with `0 <= l <= r < n`. 
Internally `l += n; r += n;` and we run `_queryTree(l, r)`.\n\n**High-level idea:**\n\n- As with updates, iterate bottom-up picking the minimal nodes that cover `[l, r]`.\n- For each chosen node `idx`, the sum contribution is:\n\n - `tree[idx]` (which already contains updates applied *at* that node), **plus**\n - contributions from **ancestor** `lazy` values that apply to `idx` but were never pushed down to `idx` (those are collected with `_climbLazy` and converted to totals with `value`).\n\n**Why climb ancestors?**\n`_updateLazy` updates `tree` for the node\u2019s total but *does not* push that node\u2019s `lazy` value down to children. If we visit a descendant node later, its `tree[desc]` will **not** include lazy values from its ancestors. `_climbLazy(desc)` aggregates `lazy` values from all ancestors so we can add their effect for the descendant node.\n\n**Core query routines**\n\n```cpp\nT query(int l, int r) { return _queryTree(l + n, r + n); }\n\nT _queryTree(int l, int r, T t = def) {\n for (; l < r; l >>= 1, r >>= 1) {\n if (l & 1) {\n t = op(t, value(l, _climbLazy(l)), tree[l]);\n ++l;\n }\n if (l == r) break;\n if (!(r & 1)) {\n t = op(t, value(r, _climbLazy(r)), tree[r]);\n --r;\n }\n }\n return op(t, value(l, _climbLazy(l)), tree[l]);\n}\n\nT _climbLazy(int idx, T cnt = def) {\n for (idx >>= 1; idx; idx >>= 1) cnt = op(cnt, lazy[idx]);\n return cnt;\n}\n```\n\n- `tree[idx]` already includes contributions from updates that were targeted at node `idx` directly.\n- `value(idx, _climbLazy(idx))` computes totals produced by lazies on **ancestors** of `idx`.\n- `op(a,b,c)` is provided as `a + (b + c)` (i.e., sum combination).\n\n---\n\n# 5. 
API interface\n\nPublic methods and how to use them:\n\n```cpp\nsegtree<int64_t> st(n); // create segment tree for n elements (all zeros initially)\nst.update(l, r, val); // add `val` to every element in [l, r] (inclusive), 0-indexed\nauto s = st.query(l, r); // returns the sum of elements in [l, r] (inclusive)\n```\n\nDetails & contracts:\n\n- Inputs `l` and `r` are **inclusive** and **0-indexed**.\n- `T` must support `+`, `*` with `int` lengths (or equivalent) and a zero default (`def`); `long long` is commonly used for sums.\n- `update` and `query` expect `0 <= l <= r < n`.\n- `tree` and `lazy` are internal; do not modify them externally.\n\n---\n\n# 6. Time and space complexity\n\n**Space:** `O(n)` memory using arrays of size `2*n`:\n\n- `tree` length `2*n`\n- `lazy` length `2*n`\n- `rng` length `2*n`\n\n**Time per operation:**\n\n- Each `update` or `query` visits `O(log n)` nodes (the set of nodes partitioning the interval).\n- However, this implementation performs an **ancestor walk** (`_updateLazy` or `_climbLazy`) for each visited node:\n\n - `_updateLazy` updates all ancestors (an `O(log n)` walk) whenever a chosen node is updated, and\n - `_climbLazy` walks upward to aggregate lazies for each visited node in a query (another `O(log n)` each).\n- Therefore the **worst-case** time per update or query is `O((log n)^2)` in the current code.\n\n**How to get strict `O(log n)` worst-case:**\n\n- Use an iterative **push/pull** approach:\n\n - `push` lazies down along the path from root to the two target leaves before performing your operation, so per-node `_climbLazy` becomes unnecessary.\n - After modifying leaves, `pull` (recompute) parents upward once.\n- That pattern is a standard iterative lazy tree optimization and yields `O(log n)` worst-case per operation.\n- The downside of this approach is that it takes significantly longer to implement, which is not worth it when the stricter bound is not needed.\n\n---\n\n# 7. 
Usage\n\n\n\nBelow are simple examples and expected results.\n\n**Example 1 \u2014 small walkthrough**\n\n```cpp\n// Create tree over n = 8 elements, all initially 0\nsegtree<long long> st(8);\n\n// Add 3 to indices [2, 5]\nst.update(2, 5, 3);\n\n// Queries:\nauto total = st.query(0, 7); // sum over entire array\nauto partial = st.query(2, 3);\n\n// Expected values:\n// indices 2,3,4,5 each increased by 3 -> 4 elements * 3 = 12\n// total == 12\n// partial (2..3) == 3 + 3 = 6\n```\n\n**Example 2 \u2014 sequence of updates**\n\n```cpp\nsegtree<long long> st(6);\nst.update(0, 2, 5); // array: [5,5,5,0,0,0]\nst.update(1, 4, 2); // array: [5,7,7,2,2,0]\n\nst.query(0, 5); // expected 5 + 7 + 7 + 2 + 2 + 0 = 23\nst.query(2, 3); // expected 7 + 2 = 9\n```\n\n---\n\n# 8. Notes, caveats & suggestions\n\n- **Undefined behavior fix:** the original compact form used `lazy[l++] = op(lazy[l], val);`, which is undefined behavior before C++17 because `l` is read and modified without sequencing. Split it into separate statements (`lazy[l] = op(...); ++l;`).\n- **Type `T` constraints:** `T` must behave like a numeric type supporting `+` and `*` by integer lengths. Use `long long` (or `int64_t`) if sums could be large.\n- **Performance tradeoff:** the current code is clear and compact, and in many practical cases it runs fast; for guaranteed worst-case `O(log n)` operations, the iterative push/pull pattern is preferred.\n- **Intervals:** the code uses inclusive intervals `[l, r]` (common for competitive programming). If you prefer half-open `[l, r)` semantics, the interface and some loop conditions simplify.\n- **Testing:** test edge cases: small `n` like `n=1`, updates where `l==r`, and non-power-of-two `n`. The `rng` ranges are exact for every node the loops select, including when `n` is not a power of two.\n\n---\n\n*Written by BooleanCube :]*\n",

"toc": [

{

"level": 1,

"id": "1-storage",

"title": "1. Storage"

},

{

"level": 1,

"id": "2-construction",

"title": "2. Construction"

},

{

"level": 1,

"id": "3-range-update-how-it-works",

"title": "3. Range update (how it works)"

},

{

"level": 1,

"id": "4-range-query-how-it-works",

"title": "4. Range query (how it works)"

},

{

"level": 1,

"id": "5-api-interface",

"title": "5. API interface"

},

{

"level": 1,

"id": "6-time-and-space-complexity",

"title": "6. Time and space complexity"

},

{

"level": 1,

"id": "7-usage",

"title": "7. Usage"

},

{

"level": 1,

"id": "8-notes-caveats-suggestions",

"title": "8. Notes, caveats & suggestions"

}

]

},

{

"title": "Zero to One #1: Physics Behind Devices",

"summary": "Join me on my journey to learn everything about computers, starting with electricity and semiconductor devices.",

"tags": [

"quant",

"computer",

"physics",

"design"

],

"date": 1766498202000,

"cover": "https://i.imgur.com/bPM1QNH.png",

"hidden": false,

"slug": "device-physics",

"content": "This journey takes us \"From Zero to One,\" starting with the simplest building blocks of 1s and 0s and culminating in a functioning computer.\nOne of the characteristics that separates an engineer or computer scientist from a layperson is a systematic approach to managing complexity.\nModern digital systems are built from millions or billions of transistors. No human being could understand these systems by writing equations describing the movement of electrons in each transistor and solving all of the equations simultaneously.\nTo truly understand how a microprocessor is created, you will need to learn to manage complexity using two systematic principles: abstraction and discipline.\n\n**Abstraction** is a technique that hides details that aren't important. A system can be viewed from many different levels of abstraction.\n\nVarious levels of abstraction for an electronic computing system along with the typical building blocks at each level:\n\n| Application Software | Operating Systems | Architecture | Microarchitecture | Logic | Digital Circuits | Analog Circuits | Devices | Physics |\n|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|:---:|\n| Programs | Device Drivers | Instructions, Registers | Datapaths, Controllers | Adders, Memories | AND gates, NOT gates | Amplifiers, Filters | Transistors, Diodes | Electrons |\n\nAt the lowest level of abstraction is the *physics*, the motion of electrons. 
The behavior of electrons is described by quantum mechanics and Maxwell's equations.\nOur system is constructed from electronic *devices* such as transistors (or vacuum tubes, once upon a time).\nThese devices have well-defined connection points called terminals and can be modeled by the relationship between voltage and current as measured at each terminal.\nBy abstracting to this level, we can ignore the individual electrons.\nThe next level of abstraction is *analog circuits*, in which devices are assembled to create components such as amplifiers.\nAnalog circuits input and output a continuous range of voltages.\n*Digital circuits* such as logic gates restrict the voltages to discrete ranges, which we will use to indicate 0 or 1.\nIn *logic* design, we build more complex structures, such as adders or memories, from digital circuits.\n\n*Microarchitecture* links the logic and architecture levels of abstraction.\nThe *architecture* level of abstraction describes a computer from the programmer's perspective.\nFor example, the Intel IA-32 architecture used by microprocessors in most personal computers (PCs) is defined by a set of instructions and registers (memory for temporarily storing variables) that the programmer is allowed to use.\nMicroarchitecture involves combining logic elements to execute the instructions defined by the architecture.\nA particular architecture can be implemented by one of many different microarchitectures with different price/performance/power trade-offs.\nFor example, the Intel Core 2 Duo, the Intel 80486, and the AMD Athlon all implement the IA-32 architecture with different microarchitectures.\n\nMoving into the software realm, the *operating system* handles low-level details such as accessing a hard drive or managing memory.\nFinally, the *application software* uses these facilities provided by the operating system to solve a problem for the user.\nThanks to the power of abstraction, we can surf the web without any regard for the 
quantum vibrations of electrons or the organization of memory in the computer.\n\nIn this article, I want to focus on the lower levels of abstraction: **physics** and **devices**. When you are working at one level of abstraction, it is good to know something about the levels directly above and below it. For example, a computer scientist cannot fully optimize code without understanding the architecture for which the program is being written.\n\n**Discipline** is the act of intentionally restricting your design choices so that you can work more productively at a higher level of abstraction.\nDigital circuits use discrete voltages, whereas analog circuits use continuous voltages.\nTherefore, digital circuits are a subset of analog circuits and in some sense must be capable of less than the broader class of analog circuits.\nHowever, digital circuits are much simpler to design.\nBy limiting ourselves to digital circuits, we can easily combine components into sophisticated systems that ultimately outperform those built from analog components in many applications.\nFor example, digital televisions and cell phones are replacing their analog predecessors.\n\n---\n\n# 1. 
Physics Behind Electricity\n\nWhen beginning to explore the world of computers, it is vital to start by understanding the basics of electricity and charge.\nBefore we can dive deeper into higher layers of abstraction, we must ensure a deep understanding of what electrical charge is, and how we describe its behavior with voltage, current, and resistance.\nI won't cover most basic physics topics here, but I will briefly explain what I learned about electricity and fix common misconceptions about the physics of electrons.\n\nElectrical charge is a basic property of subatomic particles such as electrons and protons.\nAn object becomes charged when it gains or loses electrons, creating an imbalance.\nCharged objects exert electromagnetic forces (attraction and repulsion) on each other, and the movement of charge is called electric current.\n\nIn short, voltage is the electrical 'pressure' that pushes electrical charges (electrons) to flow through a circuit, current is the rate at which charge is flowing, and resistance is a material's tendency to resist the flow of charge (current).\n\nHowever, while these definitions provide a basic understanding of how we measure electricity, I wanted to deeply understand the intuition behind what causes electricity to flow in a circuit.\nWe define voltage as the difference in electric potential (energy per unit of charge) between two points in a circuit.\nThis difference in potential puts the electrons under pressure to flow. Without voltage there is no current.\n\nI want to use a common analogy, a water tank, to help visualize this better.\nIn this analogy, charge is represented by the water amount, voltage is represented by the water pressure, and current is represented by the water flow.\n\n<div align=\"center\">\n\n\n\n*This is a water tank at a certain height above the ground. At the bottom of this tank there is a hose.*\n\n</div>\n\nThe pressure at the end of the hose can represent voltage. 
The water in the tank represents charge.\nThe more water in the tank, the higher the charge and the more pressure is measured at the end of the hose.\n\nWe can think of this tank as a battery, a place where we store a certain amount of energy and then release it.\nIf we drain our tank a certain amount, the pressure created at the end of the hose goes down.\nWe can think of this as a decreasing voltage, like when a flashlight gets dimmer as the batteries run down.\nThere is also a decrease in the amount of water that will flow through the hose.\nLess pressure means less water (current) is flowing.\n\nBatteries are designed to provide a specific amount of voltage, and while a bulb itself doesn't have a voltage, it requires a specific voltage (electrical pressure) to work.\nRather, voltage is supplied to the bulb by the electrical system, and the bulb converts that electrical energy into light and heat.\nVoltage is the \"push\" that makes current flow through the bulb's filament or LED, causing it to light up.\n\n## Voltage Intuition\n\nI feel like even with these definitions of voltage as a potential difference, it is not very intuitive how exactly it works within circuits.\nAfter many, many days of searching for answers, I found some analogies that finally made sense, and I wanted to share them.\n\nIn a standard single-loop circuit (like a series of Christmas lights), voltage is \"spent\" as current flows through resistive components.\nIn the real world, even the copper wire acts as a resistor, though a very weak one.\nWhile we often pretend wires are perfect conductors in circuit diagrams (0 Ohms), physically, copper is not a superconductor.\nIt resists the flow of electrons slightly, which causes a slight loss of energy (voltage) as the current travels from one end to the other.\nCopper atoms vibrate, and in doing so collide with moving electrons. Every collision converts a bit of energy into heat or other forms. 
This loss of energy manifests as a slight voltage drop.\nIf you run electricity through a long-distance wire, the resistance adds up over the length of the wire and reduces the voltage received on the other end.\n\nI want to use a ski slope as an analogy to explain how voltage works in a single-loop circuit.\nImagine the battery as a ski lift taking you to the top of the mountain (10V).\nEvery resistor or light bulb represents a slope, and as you ski down a slope, you lose height.\nAt the very top of the mountain, let's say you are at 10V. After the first resistor, you could be measured at 7V. After the second resistor, you might be at 3V.\nAnd at the bottom of the mountain, you are measured at 0V.\nIn this analogy, voltage represents the gravitational potential energy (height difference between you and the **ground**).\nThis concept of **GND** (ground) appears in most circuit diagrams, and it represents the point of lowest voltage in the entire circuit (0 V, end of the flow of current).\n\nWhen a wire splits into two or more branches, the voltage across each branch is exactly the same.\nAll branches connect to the same split point and the same reunion point, so the potential difference across them must be identical.\nThink of it as a river flowing downhill. 
The stream hits an island and splits into 2 streams: Stream A is wide and clear, Stream B is narrow and rocky.\nThe two streams start at the same height, and they merge again at the same height.\nUsing this analogy, the drop in height (voltage) for both streams should be equal since they have the same starting and finishing elevation, even though one stream might carry much more water (current).\nNo matter how many slopes (resistors) a stream (parallel path) may encounter on the hill, it will drop the same height (voltage) as the other stream (other parallel path).\n\nUsing height to visualize voltage and gravity to visualize the pressure of voltage made voltage much more intuitive for me.\n\n## Pulling Voltage (Visualizing Current)\n\nThis is a short one, but I wanted to briefly go over this because it is important to remember.\nNow, throughout this article voltage is described as the force that \"pushes\" electrons (or electrical charges) throughout the circuit.\nWhile this is actually an accurate definition for voltage, I don't like it very much because it makes visualizing voltage much less intuitive.\nI would like to clarify a common misconception this creates when it comes to the flow of electrons.\nThis definition makes people commonly visualize the flow of electrons in a circuit as being pushed out of a power source, but that is actually not how it works.\nA better way to visualize it is to see the electrons being pulled by the ground from the power source (the other way around), like a tug of war between the electrons.\nThis works well with the gravitational force analogy for voltage as well since gravity is a force that pulls (not pushes).\nThis is more intuitive when explaining why current doesn't flow in open circuits and explaining other mistakes where current is misinterpreted/misused.\n\nI'm guessing that is why current is conventionally shown flowing from the positive terminal to the negative terminal 
of a battery even though the flow of electrons is in the opposite direction.\nThat definitely makes it much easier to visualize electron flow.\nAlso conventionally, the positive terminal of a battery actually represents a high voltage and the negative terminal represents ground.\nThis might seem unintuitive at first because the positive terminal actually feels the least electrical pressure (voltage definition) to move electrons.\nWhen a point has a higher voltage than another point in a circuit, it basically means that it is more positive (has less electrical charge).\nThis works well with the pulling perspective of voltage, since a point of higher voltage has a stronger pull on electrons than a point of lower voltage.\n\nSo please keep this in mind throughout the article and whenever you think about current visually.\n\n## Ohm's Law\n\nTo further explain the relationship between voltage, current, and resistance:\n\n<div align=\"center\">\n\n\n\n</div>\n\nIf we increase the amount of water (charge) in the right tank, we increase the pressure (voltage) on the water (charge) from gravitational forces (electromagnetic forces).\nIn turn, even though the hose is narrower (more resistance) on the right side than the left, we still see an equal amount of water flowing (current) out of the right pipe.\nIf we increased the amount of water (charge) in the left tank, we increase the pressure (voltage), which increases the amount of water flowing (current) through the left pipe.\n\n- Water = Charge (measured in Coulombs)\n- Pressure = Voltage (measured in Volts)\n- Flow = Current (measured in Amperes, or \"Amps\" for short)\n- Hose Width = Resistance (measured in Ohms)\n\nAs explained by the analogy, Ohm combined the elements of voltage, current, and resistance to develop the formula of Ohm's Law: `V = IR`.\n`V` = Voltage in Volts, `I` = Current in Amps, and `R` = Resistance in Ohms.\n\n---\n\n# 2. 
Device Components\n\nBefore diving into circuits, it is helpful to understand the context of **device components** within **digital circuits**.\nWhile most physical variables in the real world (voltage, frequency, or position) are continuous (analog), digital systems abstract this information into discrete-valued variables.\nSpecifically, electronic computers generally use a binary representation, where a high enough voltage indicates a `1 (TRUE)` and a low enough voltage indicates a `0 (FALSE)`.\n\nThis digital abstraction allows designers to focus on the logical manipulation of 1s and 0s without constantly solving complex physics equations describing the motion of electrons in every component.\nDigital circuits are the physical implementations of this logic, restricting voltages to discrete ranges to represent binary states.\n\n## CMOS Transistors\n\nModern digital circuits are primarily built using **transistors**, which act as electronically controlled switches that turn ON or OFF when voltage or current is applied to a control terminal.\nThe two main types of transistors are *bipolar transistors* and *metal-oxide-semiconductor field effect transistors* (MOSFETs or MOS transistors, pronounced \"moss-fets\" or \"M-O-S\", respectively).\nThe specific technology used for the vast majority of chips today is known as CMOS (Complementary MOS).\nTo understand how these switches work, we must look at the underlying materials and components: semiconductors, diodes, and capacitors.\n\n### Semiconductors\n\n<div align=\"center\">\n\n\n\n</div>\n\nAn atom contains a nucleus of protons and neutrons, surrounded by several orbital shells that can each hold a maximum number of electrons.\nThe outermost orbital shell, called the valence shell, holds the electrons with the most energy.\nThe electrons are held in place by the nucleus; however, there is another energy level called the conduction band.\nIf an electron can reach it, it can break free from the valence shell and move to another atom.\nWith a metal 
conductor like copper, the conduction band and valence shell overlap, so it is very easy for the electrons in the valence shell to move around freely.\nInsulators have a packed valence shell, and the conduction band is too far away for electrons to reach, so they do not conduct electricity.\nIn **semiconductors**, the valence shell holds 4 electrons, which is too many for the material to behave like a conductor, so on its own it acts as an insulator.\nBut since the conduction band is close, if we provide some external energy, some electrons can gain enough energy to reach the conduction band and break free of the atom.\n\nBasically, a semiconductor material's ability to conduct electricity can be precisely controlled by adding impurities (doping) or applying voltage, light, or heat.\nThese semiconductor materials form the basis for transistors, diodes, and microchips that power modern electronics.\n\nCMOS technology relies on Silicon (Si), a group IV atom (so it has four electrons in its valence shell and forms bonds with four adjacent atoms) that forms a crystalline lattice.\nPure silicon turns out to be a poor conductor, so engineers add impurities called **dopants** to alter its conductivity.\nAdding Arsenic (group V) creates **n-type** silicon, which has free negatively charged electrons that act like negative charge carriers.\nAdding Boron (group III) creates **p-type** silicon, which has \"holes\" (missing electrons) that act like positive charge carriers.\n\n<div align=\"center\">\n\n\n\n</div>\n\n### Diodes\n\nA **diode** is a semiconductor device, typically made of doped silicon, that essentially acts as a one-way switch for current.\nIt allows current to flow easily in one direction but severely restricts current from flowing in the opposite direction.\n\nI want to go on a slight tangent here real fast and explain the types of current, since they are relevant to diodes and transistors.\nDC (Direct Current) flows steadily in one single direction (like from a battery), while AC (Alternating Current) periodically reverses direction, 
flowing back and forth in cycles (like wall outlets).\nAC is ideal for power grids because its voltage can be easily changed with transformers for efficient long-distance transmission, while DC is used by most electronics and batteries, often requiring conversion from AC.\nIf you were curious, power grids use generators that rotate magnets to push electrons back and forth, and transformers to step the voltage levels up or down.\n\"But how exactly?\" is beyond the scope of this study, and I will not be covering it here.\n\n<div align=\"center\">\n\n\n\n</div>\n\nBack to topic. When n-type and p-type silicon are joined, they form a **diode**. A diode acts as a one-way valve for current.\nThe p-type region is called the anode and the n-type region is called the cathode.\nWhen the voltage on the anode rises above the voltage on the cathode, the diode is forward biased, and current flows through the diode from the anode to the cathode.\nBut when the anode voltage is lower than the voltage on the cathode, the diode is reverse biased, and essentially no current flows.\n\nThe moment you join p-type and n-type material, nature tries to reach an equilibrium through a process called **diffusion**.\nDiffusion is simply the highly concentrated free electrons in the n-type region rushing across the border to fill the \"empty holes\" in the p-type region.\nWhen an electron meets a hole at the junction, they cancel each other out (recombine) and stay in place.\n\nOn the n-side, you have atoms like Phosphorus (another group V dopant) with 5 valence electrons. Since they only need 4 electrons to bond, that 5th electron is free to roam.\nHowever, the phosphorus atom is still electrically neutral because it has 15 protons and 15 electrons.\nOn the p-side, you have atoms like Boron with 3 valence electrons. 
They are also electrically neutral with 5 protons and 5 electrons, but they leave a \"hole\" in the lattice.\n\nWhen the junction is formed, those tightly packed free electrons from the n-side jump across to fill the holes in the p-side.\nAs a result, the Phosphorus atom on the n-side has lost an electron, making it a positive ion, and the Boron atom on the p-side has gained an electron, making it a negative ion.\nBecause these atoms are locked into the solid crystal lattice, they cannot move.\nYou are left with a layer of stationary positive charge near the junction on the n-side and a layer of stationary negative charge near the junction on the p-side.\n\n<div align=\"center\">\n\n<img src=\"https://i.imgur.com/cWB9QSH.png\" alt=\"PN Junction Diagram\" width=600 />\n\n*PN junction showing the depletion region with stationary ions, free electrons, and holes.*\n\n</div>\n\nThis creates something called the **depletion region** at the PN junction, which contains no mobile charge carriers and only stationary charged ions.\nIn physics, whenever you have a separation of positive and negative charges that are fixed in space, an **electric field** is automatically created, and such is the case here.\nThese stationary ions near the junction create an internal electric field within the depletion region that acts like a wall, stopping any further electrons from crossing.\nThe internal electric field applies a force that pushes free electrons back toward the n-side and holes back toward the p-side.\nI know, the diagram above looks SO misleading and it honestly is extremely unintuitive.\nThe negative and positive symbols within the depletion region represent the charge of the ions, and the other symbols in the n-side and p-side represent free electrons and holes. 
Two very different things.\nThe electric field means that positive charges experience a force that pushes them towards the negatively charged p-side of the depletion region and negative charges experience a push towards the positively charged n-side.\nThat is what the diagram actually means.\n\nBasically, this electric field creates a **potential barrier** which is simply the voltage equivalent of that field.\nFor electrons to cross the junction, a voltage bigger than the potential barrier needs to be applied.\nThink of it like: the electric field is the steepness of the slope, and the voltage is the height of the hill.\n\nYou might be wondering: \"why don't all the electrons just cross over until all holes are filled?\"\nReally, it's a balance of two opposing effects: diffusion and drift.\nDiffusion is the natural tendency for electrons to cross over to fill the holes in the p-side, and drift is the push back from the electric field behind the potential barrier.\nThe depletion region stops growing the exact moment the electric field becomes strong enough to counteract the force of diffusion.\nThis state is known as equilibrium.\n\nBefore I dive into forward and reverse bias of a diode, I strongly recommend that you refresh your memory of the current intuition I explained earlier and really ingrain that pulling perspective of current.\nWhen you apply a **forward bias** to a diode, you connect the positive terminal (high voltage) to the p-side and the negative terminal (or ground) to the n-side.\nIn turn, electrons are pulled away from the p-side (and pushed into the n-side), breaking the equilibrium, overcoming the voltage produced by the potential barrier, squashing the depletion region and allowing current to flow through.\nHowever, when you apply a **reverse bias**, you connect the positive terminal (high voltage) to the n-side and the negative terminal (or ground) to the p-side.\nThis results in electrons being pulled away from the n-side junction, and in consequence pushes holes away from the p-side 
junction (widening the depletion region).\nSince the free electrons (negative charge carriers) are pulled away from the junction, and empty holes (positive charge carriers) are pushed away from the junction, we are left with a wider depletion region at the junction of the diode, filled with ions which strengthen the electric field and potential barrier even more.\nSo, a reverse biased diode acts as a strong insulator with only a microscopic leakage current.\n\nQuick tangent to make sure you understand!\nIn forward biased diodes, we can say \"electrons are pulled from the p-side and pushed into the n-side of the diodes\" because current flows through diodes in forward bias.\nHowever, that is not the case for reverse biased diodes. In reverse biased diodes, electrons feel a pulling force from the n-side terminal which attracts the electrons away from the junction.\nThis causes holes to be pushed away from the junction as well, which widens the depletion region and blocks current.\nHowever, there are no electrons being pushed into the p-side of the diode because the electron tug of war ends at the PN junction of the diode.\nRevisit my notes on visualizing current (find them in the table of contents) if you don't understand what I am trying to get at here.\n\nAll of that combined should intuitively explain the science behind how diodes act as one-way valves for current.\nHere is a quick [video](https://youtu.be/Fwj_d3uO5g8) to help visualize and understand the physics behind a diode.\n\nQuick note! 
Diodes don't always allow current to flow in forward bias though.\nA silicon diode requires a specific minimum voltage (known as the forward voltage drop, roughly 0.6-1.0 V) to overcome its potential barrier before conducting electricity, after which current increases exponentially.\nEven though diodes block current with reverse bias, if the reverse voltage is high enough (exceeding the diode's reverse breakdown voltage), it will conduct, but this usually causes permanent failure.\n\n<div align=\"center\">\n\n\n\n*The diode symbol intuitively shows that current only flows in one direction.*\n\n</div>\n\n### Capacitors\n\nA **capacitor** is an electrical circuit component that temporarily lets current flow through and temporarily stores electrical energy (like a battery).\nIt contains two conductive plates separated by an insulating dielectric.\nA dielectric is an electrical insulator (glass, ceramic, plastic, etc.) that supports an electric field by becoming polarized, meaning its charges shift slightly but no current is allowed to flow through it.\nThis layer is essential, as it allows a voltage to develop across the plates by holding an electric charge instead of letting current flow between them.\n\nIn simple terms, the capacitance of a capacitor is a measure of the capacitor's ability to store electrical charge on its plates.\nThe ratio of stored charge `Q` to the applied voltage `V` gives the capacitance `C`: `C = Q / V`.\nIt is slightly unintuitive at first, but once you realize that charge and voltage are completely separate quantities, capacitance starts to make more sense.\nThink of it like: a bucket's size (Capacitance) is independent of how much water (Charge) is in it or how much pressure (Voltage) is at the bottom.\nWhen a voltage `V` is applied across the conductors, one conductor accumulates electric charge `Q` and the other conductor accumulates the opposite charge `-Q`.\nWhile we often describe the charge as being held on the plates, the energy is more accurately 
stored in the electric field between them.\nAs current flows into the capacitor, the field strengthens, and as it discharges, the field weakens, releasing the stored energy back into the circuit.\n\nCapacitance, measured in Farads (F), depends on several factors: the area of the conductive plates, the type of dielectric material, and the separation distance between the plates (the thickness of the dielectric).\nA larger plate area or a smaller gap between the plates increases capacitance, allowing the capacitor to store more charge at a given voltage.\nTo show how these variables are related, you can also calculate the capacitance of a parallel-plate capacitor using this formula: `C = \ud835\udf00 * (A / d)`.\n`\ud835\udf00` represents the permittivity (ability to store electrical energy in an electric field) of the dielectric material, `A` represents the area of the conductive plates, and `d` represents the distance between them.\n1 Farad means the capacitor holds 1 Coulomb of charge at a potential difference of 1 Volt.\nYou will more commonly see capacitors measured in picofarads (pF), nanofarads (nF) or microfarads (\u03bcF).\n\nWhen a voltage is applied and electrons gather on a plate, the dielectric becomes positively charged near the negatively charged plate and vice versa. 
This process is called polarization.\nThe charged plates shift the electron clouds within the dielectric, which creates a tiny internal electric field.\nThe two oppositely charged conductive plates separated by the dielectric also begin to form a larger external electric field.\nThe dielectric not only helps as an insulator between the plates but also uses its internal field to oppose the external field of the plates.\nIt's important to note that this is an intended feature of the dielectric because it reduces the voltage that builds up for a given amount of charge, which in turn increases the capacitance of the capacitor.\nThe external electric field is strong enough to hold the charges on these plates until the capacitor is connected to another circuit and discharged.\n\n<div align=\"center\">\n\n\n\n</div>\n\nBefore I begin explaining how charging and discharging a capacitor works, I want to explain how circuits actually work with capacitors since they are physically open circuits.\nBoth conductive plates within the capacitor have a bunch of electrons randomly roaming around much like the copper wire of any circuit.\nInitially, when the capacitor is fully discharged, the plates are both electrically neutral and no energy or charge is stored yet.\nOnce you start charging the capacitor, electrons start to build up on one of the plates, drawing positive charge from the dielectric toward it and pushing the negative charge within the dielectric away.\nThis forms an electric field across the capacitor which begins to push the electrons of the other plate out.\nThis creates the illusion of current \"flowing through\" the capacitor even though there are no electrons passing through the capacitor because of the insulator between the plates.\nIt is important to note that positive charges (protons) don't move since all the atoms are tethered in the solid dielectric; it is just the shifting electron clouds that create dipoles in the 
dielectric.\n\n<div align=\"center\">\n\n\n\n*RC circuit: a capacitor (C) in series with a resistor (R), connected to a battery (V_s) through a switch.*\n\n</div>\n\nThe circuit above contains a capacitor (`C`) in series with a resistor (`R`), both connected to a battery power supply (`V_s`) through a mechanical switch.\nAt the instant the switch is closed, the capacitor starts charging up through the resistor.\nThis charging process continues until the capacitor's voltage is equal to the battery supply's voltage.\n\n<div align=\"center\">\n\n\n\n*Capacitor voltage (V_c) approaching supply voltage (V_s) over time during charging.*\n\n</div>\n\nAs the capacitor starts charging, charge builds up on its plates, creating an increasing voltage `V_c` that opposes the battery voltage `V_s`.\nThis opposition gradually reduces the current as the voltage `V_c` approaches `V_s`, resulting in an exponential decrease in current over time.\n\nThis charging behavior follows a time constant represented by: `\ud835\udf0f = RC`.\n`R` represents the resistance of the circuit in Ohms, and `C` represents the capacitance of the circuit in Farads.\nThe time constant `\ud835\udf0f` represents the time (in seconds) required for the capacitor to reach ~63% of its full charge potential.\nThe value of `\ud835\udf0f` depends on the resistance `R` and capacitance `C`: a larger `R` slows the charging rate, while a larger `C` allows the capacitor to hold more charge, also requiring more time to reach full charge.\n\nAs time progresses, the voltage across the capacitor follows an exponential curve, `V_c(t) = V_s * (1 - e^(-t / \ud835\udf0f))`, increasing quickly at first but more slowly as it approaches `V_s`.\nAt around `5\ud835\udf0f`, the capacitor voltage `V_c` has essentially reached `V_s`, and we consider it fully charged.\nAt this point, known as the Steady State Period, the capacitor behaves like an open circuit, holding the full supply voltage across it, while current falls to 0, and the total charge reaches `Q = CV`.\nThe stored charge will forever 
stay in the capacitor and won't be lost until it is connected to another circuit, at least in theory. In practice, however, capacitors slowly leak charge.\nNote that theoretically, the capacitor never actually reaches 100% of its full charging potential.\nEven after `5\ud835\udf0f`, the capacitor only reaches 99.3%, but for all practical purposes, we can consider the capacitor fully charged at this point, as there is hardly any change after this.\n\n<div align=\"center\">\n\n\n\n*A capacitor and bulb in parallel: when the switch opens, the capacitor discharges to keep the bulb lit.*\n\n</div>\n\nNow, take the circuit above with a capacitor and a bulb connected in parallel, powered by a battery power supply through a mechanical switch.\nWhen we close the switch, current flows through the circuit, charging up the capacitor and lighting the bulb in parallel.\nIf we let the capacitor charge for a while and then open the switch, you can see that the bulb actually stays lit since the capacitor immediately starts discharging and releases its charge back into the circuit.\nThe bulb will stay lit until the capacitor is done discharging, meaning it is back to its default state and the plates have an equal charge again.\nIf we mimic a pulsating DC by repeatedly flipping the mechanical switch of the circuit, the bulb will stay lit all the time because it is being powered by the battery when the switch is closed and being powered by the capacitor when the switch is open.\nThis demonstrates how a capacitor can smooth out the ripples that can appear while converting AC to DC.\n\nAdditionally, when a capacitor is placed in a DC circuit, it charges up to match the supply voltage, and once charged, it effectively blocks the flow of current.\nIn an AC circuit, however, the capacitor behaves differently.\nSince AC constantly changes direction, the capacitor repeatedly charges and discharges, creating an effect that lets AC current \"pass through\" the capacitor.\n\nHere is a quick 
[video](https://youtu.be/X4EUwTwZ110) that visually explains how electricity \"flows through\" the capacitor.\n\n### Current Rectification\n\nOur homes are powered by power grids that provide AC to our wall outlets.\nOur electronic devices need to convert the AC from these outlets to DC because most sensitive electronics (computers, phones, etc.) run on a steady, one-way flow of electrons (DC), not the fluctuating AC waveform.\nOur devices convert AC to DC using a process called **rectification**, which typically involves: a transformer to adjust the voltage, a rectifier circuit (diodes) to change AC to pulsating DC, a capacitor to smooth the ripples, and a voltage regulator to provide a steady, constant output for electronics.\n\n<div align=\"center\">\n\n<img src=\"https://i.imgur.com/GJHft6R.png\" alt=\"Full-Wave Rectifier Circuit\" width=\"400\" />\n\n*Diagram of a circuit that shows how a full-wave rectifier is constructed.*\n\n</div>\n\nThis is a breakdown of all the steps in the rectification process:\n\n1. **Step-Down Transformer**: The high AC voltage from the wall outlet is reduced to a lower, more manageable AC voltage level for the device.\n2. **Rectifier (Diodes)**: Diodes allow current to flow in only one direction.\n - **Half-wave rectification**: Blocks the negative half of the AC wave, resulting in a pulsating DC.\n - **Full-wave rectification**: Uses four diodes to flip the negative half of the wave, making it positive, creating a smoother, but still bumpy, DC output. Check the figure above to visualize the circuit.\n3. **Filter (Capacitor)**: A capacitor charges up during the peaks of the pulsating DC and discharges during the dips, smoothing out the ripples and creating a steadier DC.\n4. 
**Voltage Regulator**: The regulator ensures a precise, constant DC voltage by compensating for any remaining fluctuations, providing the stable power needed for sensitive electronics.\n\n## nMOS vs pMOS\n\nMOSFETs are kind of like sandwiches that consist of layers of conductive and insulating materials. These MOSFETs are built on thin and flat **wafers** like most modern electronics.\nA wafer is a thin slice of semiconductor material, typically high-purity crystalline silicon, used as the substrate for fabricating integrated circuits (chips) in electronics.\nThese thin, usually circular discs (15 - 30 cm in diameter) serve as the foundational base upon which microelectronic devices are manufactured through processes like doping, etching, and deposition.\n\nThe manufacturing process of a MOSFET begins with a bare wafer, and then involves a sequence of steps in which dopants are implanted into the silicon, thin films of silicon dioxide and silicon are grown, and metal is deposited.\nBetween steps, the wafer is carefully and precisely patterned using photolithography, a very accurate light-based process, so that the materials appear exactly where they are desired.\nSince transistors are literally a fraction of a micron (1e-6 m) in length, an entire wafer is processed at once.\nOnce the processing is complete, the wafer is cut into tiny rectangles called **chips** containing millions or billions of transistors.\nThese chips are first tested, and then placed in a plastic or ceramic package with metal pins on the bottom to connect them to circuit boards.\n\n<div align=\"center\">\n\n<img src=\"https://i.imgur.com/mTtlJpJ.png\" alt=\"Silicon Wafer\" width=\"400\" />\n\n*An example of a silicon wafer body and the chips that it gets cut into.*\n\n</div>\n\nSpecifically, MOSFET sandwiches consist of 3 main layers: a conductive layer on the top called the gate, an insulating dielectric layer of silicon dioxide (`SiO_2`) in the middle, and the silicon wafer called the 
substrate on the bottom.\nIf you were wondering, silicon dioxide is basically just glass and is often simply called *oxide* in the semiconductor industry.\nHistorically, the gate was constructed from metal, hence the name metal-oxide-semiconductor. However, modern manufacturing processes use polycrystalline silicon for the gate because it doesn't melt during some of the subsequent high-temperature processing steps.\nThe gate acts as the switch that blocks or allows current through the MOSFET when there is a voltage applied across the source and drain.\n\n<div align=\"center\">\n\n\n\n*Cross-sectional view of nMOS (left) and pMOS (right) transistors showing the source, gate, and drain terminals.*\n\n</div>\n\nAs shown by the figure above, there are two flavors of MOSFETs: nMOS and pMOS. The figure illustrates the cross section of the nMOS and pMOS from the side.\nThe n-type transistors, also known as nMOS, have 2 separate regions of n-type dopants neighbouring the gate (called the **source** and **drain**) that are implanted into a p-type semiconductor substrate base.\nThe pMOS transistors are just the opposite, consisting of p-type source and drain regions in an n-type substrate base.\n\nNow you're probably wondering: \"why are there 2 flavors of MOSFETs and what's the difference between how they function?\"\nBefore I get into that, I want to first dive into the nMOS transistor and how the components we learned about earlier come together to operate this transistor.\nAfter that, I can explain how the construction and operation of a pMOS differ from those of an nMOS.\n\n### Working principle of nMOS\n\nThe source and drain of an nMOS are both connected to regions of n-type dopants that are embedded into the top of the p-type wafer substrate, as shown in the diagram.\nDoesn't this look familiar? 
The n-type regions that were embedded into the p-type substrate actually create two back-to-back **diodes** within the MOSFET, from source to body and drain to body.\nThe PN junctions between these regions and the substrate each form a depletion region, which blocks electrons from crossing when there is no voltage applied across the source and drain.\n\n> **When the gate (the switch of the MOSFET) is OFF but there is a voltage applied across the source and drain of an nMOS, what is stopping current from flowing through?**\n\n> Before I begin explaining, it might be helpful to revisit how diodes work and understand the pulling voltage intuition better.\n> So when the gate is electrically neutral, meaning there has been no voltage applied to it yet, but there is a voltage across the source and drain, MOSFETs block current from flowing through as they are designed to.\n> The question is not why, but how? Think of how the electrons are moving. The applied voltage pulls electrons out of the drain toward the positive terminal that the drain is attached to.\n> Basically, electrons within the drain experience a pulling force from the supply, which pulls them away from the PN junction, making the depletion region wider.\n> In simple terms, the current is trying to flow through a reverse biased diode which we have learned acts as an insulator, therefore blocking the current from \"leaking\" from the drain into the floor of the chip.\n> This is a brief explanation as to how reverse biased diodes block current; read the notes on diodes for a more in-depth explanation.\n> Ultimately, for a diode to pass current, it needs to be forward biased (p-type region has a higher voltage), but in the case of an nMOS transistor the p-type substrate is connected to Ground (0V).\n> No matter how much voltage is provided to the drain, the diodes will forever be reverse biased.\n\nIf you haven't noticed already, the metal-oxide-semiconductor sandwich we manufactured earlier actually forms a **capacitor**. 
Take a look at the figure of an nMOS transistor above.\nThere is a thin insulating dielectric layer (from the silicon dioxide) that separates the two conductive plates, which are the polysilicon gate on top and the silicon wafer substrate on the bottom.\nA MOSFET behaves as a voltage-controlled switch in which the gate voltage, with the support of the dielectric, creates an electric field that turns ON or OFF a connection between the source and drain, hence the name **field effect transistor**.\n\n<div align=\"center\">\n\n\n\n*Visualization of the electric field and inversion layer forming under the gate of an nMOS transistor.*\n\n</div>\n\n> **How does an nMOS allow current to flow between the source and drain when the gate is ON?**\n\n> By applying a positive voltage to the gate, the power source (like a battery) is effectively sucking electrons out of the gate material, making the gate positively charged.\n> Since the gate is now positively charged and the dielectric is an insulator, those \"missing\" electrons can't be replaced by the substrate below, instead creating a static electric field.\n> The electric field becomes strong enough for the positively charged gate to act like a magnet for negative charges, pulling the free electrons in the p-type substrate towards the gate, and pushing the holes away.\n> Since the electric field pulled the free electrons in the p-type substrate, it temporarily disables the depletion layers at the PN junctions.\n> As those electrons pile up against the bottom of the dielectric, they form a thin but highly concentrated layer of negative charge called the **inversion layer**.\n> Since this layer is full of electrons, this specific part of the p-type substrate behaves like n-type silicon and creates a channel (or bridge) for electrons to flow from the source to the drain.\n> Refer to the visual above or watch the [video](https://youtu.be/IcrBqCFLHIY) (where I grabbed it from) to visualize it better.\n\n<div 
align=\"center\">\n\n\n\n*nMOS transistor in OFF state (left) and ON state (right) showing channel formation.*\n\n</div>\n\n> **If the MOSFET uses the physical structure of a capacitor, the gate stores the charge accumulated when a voltage is applied, so how do you turn the nMOS OFF?**\n\n> Because the gate oxide is such a high quality insulator, if you apply +5V to the gate and then simply cut the wire (leaving the gate floating), the charge has nowhere to go.\n> The nMOS would stay ON indefinitely or until all the charge slowly leaks out.\n> To actually turn the transistor OFF, you can't just stop applying the positive voltage to the gate; you have to actively drain the charge.\n> In most digital circuits (like the processor in our computers), the gate is connected to a \"driver\" circuit.\n> To turn the nMOS ON, the driver connects the gate to the Supply Voltage (`V_DD`). To turn the nMOS OFF, the driver flips a switch and connects the gate to Ground (`V_ss`).\n> By connecting the gate to Ground (an ocean of electrons), you provide a low-resistance path for electrons to rush back into the gate, neutralize the positive charge and kill the electric field.\n> Looking into how the driver circuit works is not within the scope of the study but still encouraged if you are interested!\n\n<div align=\"center\">\n\n<img src=\"https://i.imgur.com/HWZnJf8.png\" alt=\"BJT vs MOSFET Comparison\" width=600 />\n\n*Comparison of BJT (current-controlled) and MOSFET (voltage-controlled) transistor operation.*\n\n</div>\n\n> **Why do transistors today use a capacitor structure?**\n\n> Using a capacitor (for the \"Field Effect\") instead of a direct connection (like the \"Current Effect\") in older Bipolar Junction Transistors (BJT) was one of the most important design choices in human history.\n> In older BJTs, you have to keep pushing current into the base to keep it ON, which constantly wastes energy.\n> In a MOSFET, however, once you charge the capacitor, 
no more current flows, and it stays ON for free.\n> That's the main reason why your phone doesn't get hot just sitting in your pocket with the screen off. I'm not interested in diving deep into BJTs here since they are rarely used in modern digital chips.\n> Also, since the gate is completely insulated by the capacitor's dielectric, we can control larger electric components like massive motors with a tiny microprocessor and not have to worry about any high power leaking back into the processor which could potentially fry it.\n> Capacitive gates are also much easier to shrink, and as you make a MOSFET smaller, you make the \"capacitor\" smaller, making it faster to charge and discharge.\n> This allowed engineers to go from fitting a few thousand transistors in a chip to billions today, keeping Moore's Law alive.\n> Watch this [video](https://youtu.be/MiUHjLxm3V0) by Veritasium about modern transistors to understand the latest advancements in transistor technology.\n\n### Working principle of pMOS\n\nAs a matter of fact, the operation of a pMOS transistor is the exact opposite of that of an nMOS transistor.\nThe only difference between the construction of a pMOS and nMOS is the wafer substrate and the dopant regions.\nIn a pMOS transistor, the wafer body is actually doped as n-type silicon and the source and drain are both connected to regions of the wafer embedded with p-type dopants.\n\n<div align=\"center\">\n\n\n\n*Cross-section showing how nMOS and pMOS transistors coexist on a single p-type wafer using n-wells.*\n\n</div>\n\nThat might make you curious: how do CMOS circuits combine nMOS and pMOS transistors to work together if their entire wafer bodies need to be doped differently?\nThis is where the magic of combining nMOS and pMOS together to give us CMOS technology comes into play.\nWe actually start with a single p-type wafer (connected to Ground), and then \"dig\" little swimming pools of n-type silicon dopants into it called **n-wells**.\npMOS transistors sit within 
these n-wells because they require an n-type body to operate, with p-type source and drain regions implanted into the n-wells.\n\nLogically, the pMOS functions as the exact opposite of the nMOS transistor, but that is a mere illusion as they actually operate very similarly.\nThe nMOS is OFF when the gate experiences no voltage and ON when a positive voltage is applied to the gate.\nThe pMOS works the opposite way, meaning it is ON when the gate experiences no voltage, but OFF when the gate experiences a positive enough voltage.\nHowever, the pMOS doesn't exactly work the way you might initially imagine.\n\nIn the vast majority of CMOS circuits, the source and wafer body are tied to the same wire.\nIn an nMOS, the source and body are both connected to ground because it is the Source's job to provide electrons and the Body's job to keep the source-body and drain-body diodes reverse biased.\nConnecting the p-type wafer of an nMOS to ground pushes electrons into the wafer, neutralizing the electric field between the gate and the body, and keeping the diodes reverse biased by holding the depletion regions strong.\nSimilarly, in a pMOS, the source and body are both connected to the point of highest voltage (`V_DD` ~ +5V).\nIn the case of pMOS, it is the Source's job to provide holes to the circuit and the Body's job to provide a high enough voltage to the n-type substrate such that the p-type source and drain can never have a higher voltage, keeping them reverse biased.\nSo the source-body and drain-body diodes can never become forward biased, because the Body is tied to the highest voltage in the circuit.\n\n<div align=\"center\">\n\n<img src=\"https://i.imgur.com/LLJHNQI.png\" alt=\"CMOS Logic Gate Structure\" />\n\n*CMOS logic structure showing the Pull-Up Network (pMOS) and Pull-Down Network (nMOS).*\n\n</div>\n\nHowever, since the substrate is tied to a high positive voltage, when the gate is electrically neutral (0V), an electric field across the 
dielectric is formed.\nThis electric field points in the opposite direction to that of an nMOS and repels the free electrons in the n-type substrate but attracts the holes from the source, drain, and body.\nThis creates a thin p-type inversion layer at the bottom of the dielectric, within the n-type substrate, and temporarily disables the depletion layer at the PN junctions.\nThe channel formed also allows holes (positive charge carriers) to flow from the source to the drain.\nIn reality, electrons are jumping from the drain, into the holes of the channel, and moving towards the source, while conventional current flows in the opposite direction.\nConventional current in nMOS transistors flows from drain to source, but source to drain in pMOS transistors.\n\nWhen you provide a positive voltage to the gate that is equal to the source/body voltage, the electric field across the dielectric gets neutralized, and the diodes are back to being reverse biased to block current.\nEven though the pMOS is constructed differently, it operates on the exact same principle as the nMOS.\nHowever, the operation of a pMOS creates the \"logical illusion\" of being completely opposite to that of an nMOS transistor.\nUltimately, when the gate is connected to Ground (0V) the pMOS is considered ON, and when the gate is connected to `V_DD` (~ +5V) it is considered OFF, making the pMOS the logical opposite of the nMOS transistor.\n\n### Why both flavors?\n\nUnfortunately, MOSFETs are not perfect switches. 
CMOS circuits face a physical limitation called the Threshold Voltage (`V_th`).\nTo understand this, you have to remember that a MOSFET follows the source voltage when it's trying to pass a signal.\nIn an nMOS, the gate needs to have a slightly higher voltage than the voltage it is trying to move, and in pMOS, the gate needs to have a slightly lower voltage than the voltage it's trying to move.\nnMOS is very good at passing 0s because whether the gate is \"closed\" or \"wide open\", it passes a strong 0 since the source voltage is already 0.\nWhen trying to pass a +5V signal, the source voltage slowly starts to increase but eventually stops around 4.3V (for a 0.7V threshold voltage), because any higher and the gate would no longer sit a threshold above the source, turning the transistor OFF.\nTherefore nMOS transistors are weak when trying to pass 1s.\nConversely, pMOS is good at passing 1s for the exact same reason.\nWhen trying to pass a 0V signal, the source voltage slowly starts to decrease but eventually stops around 0.7V (because of the threshold), because any lower and the gate would no longer sit a threshold below the source, turning the transistor OFF.\n\nSince nMOS is bad at passing 1s and pMOS is bad at passing 0s, CMOS technology uses them in complementary pairs the majority of the time (hence the name \"Complementary MOS\").\nIn digital logic, specifically CMOS, every logic gate is divided into two separate halves that work together: the **Pull-Up Network (PUN)** and the **Pull-Down Network (PDN)**.\nTheir job is to ensure the output pin is always connected to either High (`V_DD`) or Low (`GND`), and never both at the same time.\nI will dive deeper into driver circuits, gate networks, and more in the next study talking about circuits and logic. Stay tuned!\n\n---\n\n# Resources\n\n- [csl.cornell.edu/courses/ece2300](https://www.csl.cornell.edu/courses/ece2300/readings.html)\n- \"Digital Design and Computer Architecture, RISC-V Edition,\" by D. M. Harris and S. L. 
Harris (Morgan Kaufmann, 2021)\n- [Making logic gates from transistors - YouTube](https://youtu.be/sTu3LwpF6XI)\n- [learn.sparkfun.com (physics of electricity)](https://learn.sparkfun.com/tutorials/voltage-current-resistance-and-ohms-law/all)\n- [fluke.com/blog/electrical/diode (what is a diode)](https://www.fluke.com/en-us/learn/blog/electrical/what-is-a-diode)\n- [florisera.com/capacitors (what is a capacitor)](https://florisera.com/introduction-to-capacitors/)\n\n---\n\n*Written by BooleanCube :]*\n",

"toc": [

{

"level": 1,

"id": "1-physics-behind-electricity",

"title": "1. Physics Behind Electricity"

},

{

"level": 2,

"id": "voltage-intuition",

"title": "Voltage Intuition"

},

{

"level": 2,

"id": "pulling-voltage-visualizing-current",

"title": "Pulling Voltage (Visualizing Current)"

},

{

"level": 2,

"id": "ohms-law",

"title": "Ohm's Law"

},

{

"level": 1,

"id": "2-device-components",

"title": "2. Device Components"

},

{

"level": 2,

"id": "cmos-transistors",

"title": "CMOS Transistors"

},

{

"level": 3,

"id": "semiconductors",

"title": "Semiconductors"

},

{

"level": 3,

"id": "diodes",

"title": "Diodes"

},

{

"level": 3,

"id": "capacitors",

"title": "Capacitors"

},

{

"level": 3,

"id": "current-rectification",

"title": "Current Rectification"

},

{

"level": 2,

"id": "nmos-vs-pmos",

"title": "nMOS vs pMOS"

},

{

"level": 3,

"id": "working-principle-of-nmos",

"title": "Working principle of nMOS"

},

{

"level": 3,

"id": "working-principle-of-pmos",

"title": "Working principle of pMOS"

},

{

"level": 3,

"id": "why-both-flavors",

"title": "Why both flavors?"

},

{

"level": 1,

"id": "resources",

"title": "Resources"

}

]

},

{

"title": "Cracking The Technical Interview",

"summary": "Learn my step by step outline of a perfect technical interview.",

"tags": [

"technical",

"guide",

"programming"

],

"date": 1762178202000,

"cover": "https://i.imgur.com/ABSMW3G.png",

"hidden": false,

"slug": "technical-interviews",