mhc is a next-generation PyTorch library that reimagines residual connections. Instead of simple one-to-one skips, mHC learns to dynamically mix multiple historical network states through geometrically constrained manifolds, bringing Honey Badger toughness to your gradients.

| 🚀 High Performance | 🧠 Smart Memory | 🛠️ Drop-in Ease |
|---|---|---|
| Reach deeper than ever before with optimized gradient flow. | Dynamically mix past states for richer feature representation. | Transform any model to mHC with a single line of code. |

> We recommend `uv` for faster, more reproducible installs, but standard `pip` is fully supported.

```bash
# Using pip (standard)
pip install mhc

# Using uv (faster, recommended)
uv pip install mhc

# Visualization utilities
pip install "mhc[viz]"
uv pip install "mhc[viz]"

# TensorFlow support
pip install "mhc[tf]"
uv pip install "mhc[tf]"
```

```python
import torch
from torch import nn
from mhc import MHCSequential

# Create a model with mHC skip connections
model = MHCSequential([
    nn.Linear(64, 64),
    nn.ReLU(),
    nn.Linear(64, 64),
    nn.ReLU(),
    nn.Linear(64, 32),
], max_history=4, mode="mhc", constraint="simplex")

# Use it like any PyTorch model
x = torch.randn(8, 64)
output = model(x)
```

Transform any model to use mHC with one line:

from mhc import inject_mhc

import torchvision.models as models

model = models.resnet50(pretrained=True)

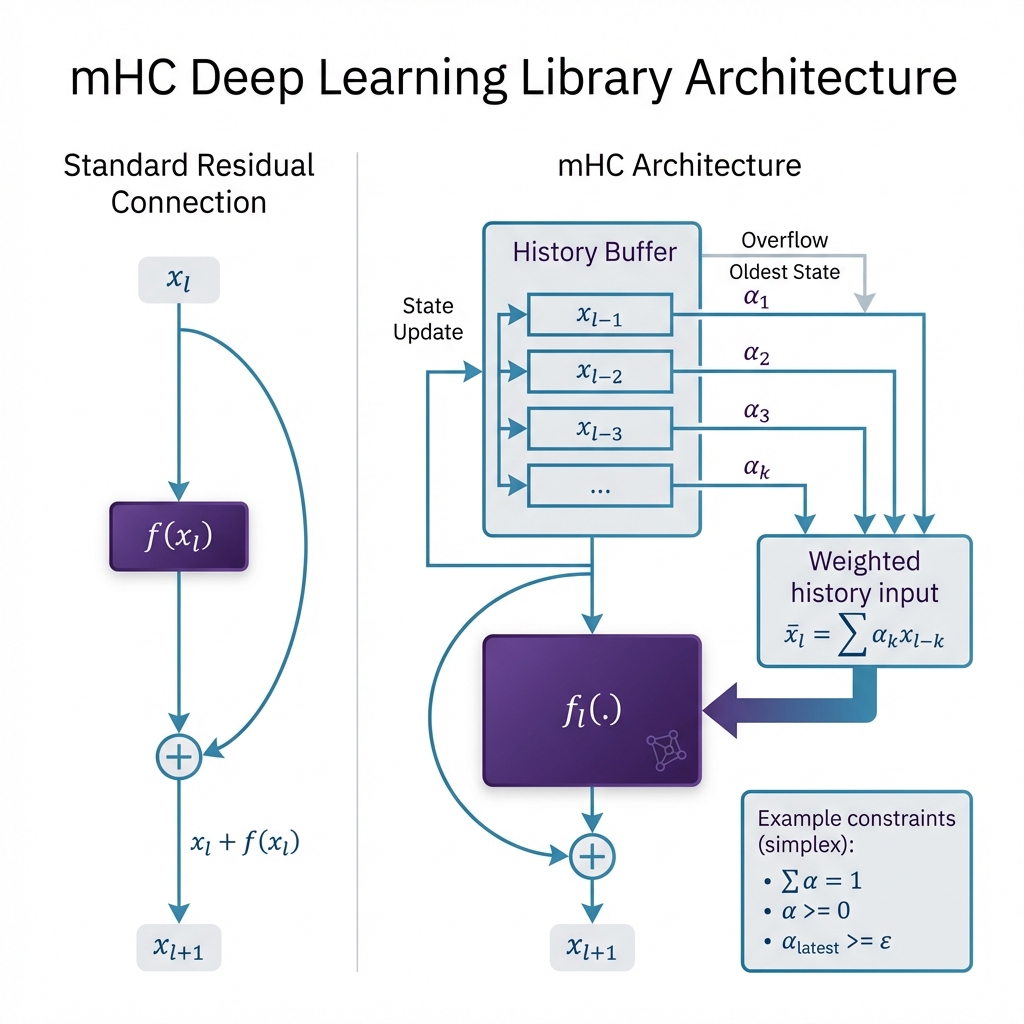

inject_mhc(model, target_types=nn.Conv2d, max_history=4)Standard residual connections only look one step back:
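For comparison, a standard one-step residual block in plain PyTorch looks like this; `ResidualBlock` is an illustrative name, not part of mhc. The skip adds back only the immediately preceding state, so if the residual branch outputs zeros the block is exactly the identity.

```python
import torch
from torch import nn


class ResidualBlock(nn.Module):
    """Illustrative standard residual block: x_{l+1} = x_l + F(x_l)."""

    def __init__(self, dim: int):
        super().__init__()
        # F: a small two-layer MLP that keeps the feature width unchanged
        self.f = nn.Sequential(nn.Linear(dim, dim), nn.ReLU(), nn.Linear(dim, dim))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # The skip carries forward only the current state x_l
        return x + self.f(x)


block = ResidualBlock(64)
y = block(torch.randn(8, 64))
print(y.shape)  # torch.Size([8, 64])
```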

mHC implements a history-aware manifold that mixes a sliding window of previous layer states. Its key ingredients are:

- $\alpha_{l,k}$: learned mixing weights, i.e. the weight layer $l$ assigns to the $k$-th state in its history window, optimized for feature relevance.
- Constraints: the weights are projected onto stable manifolds (Simplex, Identity-preserving, or Doubly Stochastic) to keep the mixing numerically stable during training.
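To make history mixing concrete, here is a minimal plain-PyTorch sketch. It assumes the update $x_{l+1} = \mathcal{F}(x_l) + \sum_{k=0}^{H-1} \alpha_{l,k}\, x_{l-k}$, with $H$ playing the role of `max_history`, and enforces the simplex constraint with a softmax over learnable logits. `HistoryMixResidual` is a hypothetical class, not mhc's API; the library's actual formulation and projections may differ.

```python
import torch
from torch import nn


class HistoryMixResidual(nn.Module):
    """Hypothetical sketch (not mhc's API): x_{l+1} = F(x_l) + sum_k alpha_{l,k} x_{l-k}."""

    def __init__(self, dim: int, max_history: int = 4):
        super().__init__()
        self.max_history = max_history
        # F: the layer's own transformation (same width in and out)
        self.f = nn.Sequential(nn.Linear(dim, dim), nn.ReLU())
        # Unconstrained logits; softmax maps them onto the probability simplex
        self.logits = nn.Parameter(torch.zeros(max_history))

    def forward(self, history: list[torch.Tensor]) -> torch.Tensor:
        # history[-1] is the current state x_l; reversing gives window[k] = x_{l-k}
        window = history[-self.max_history:][::-1]
        # Early layers see fewer than max_history states, so use only len(window) logits
        alpha = torch.softmax(self.logits[: len(window)], dim=0)
        # Contract the history axis: sum_k alpha[k] * window[k]
        mixed = torch.tensordot(alpha, torch.stack(window), dims=1)
        return self.f(history[-1]) + mixed


torch.manual_seed(0)
dim, depth = 16, 6
layers = nn.ModuleList([HistoryMixResidual(dim, max_history=4) for _ in range(depth)])

states = [torch.randn(8, dim)]  # x_0
for layer in layers:
    states.append(layer(states))  # each layer reads the running history
print(states[-1].shape)  # torch.Size([8, 16])
```

Keeping the full `states` list is wasteful for deep stacks; a bounded buffer such as `collections.deque(maxlen=...)` could hold just the window the layers read. Softmax is only one way to stay on the simplex; projecting raw weights after each optimizer step is another.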

| Benefit | Description |
|---|---|
| Deep Stability | Geometric constraints prevent gradient explosion even at 200+ layers. |
| Feature Fusion | Multi-history mixing allows layers to recover lost spatial or semantic info. |
| Adaptive Flow | The network learns which historical states are most important for the current layer. |
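The Simplex and Doubly Stochastic constraints correspond to well-known projections. The sketch below shows two common choices, the sort-based Euclidean projection onto the probability simplex and Sinkhorn normalization toward a doubly stochastic matrix; whether mhc uses these exact algorithms is an assumption, `project_to_simplex` and `sinkhorn` are illustrative names rather than library functions, and the Identity-preserving option is not sketched. The last lines check the property behind Deep Stability: a convex combination of states can never have a larger norm than the largest state it mixes.

```python
import torch


def project_to_simplex(v: torch.Tensor) -> torch.Tensor:
    """Euclidean projection of a 1-D tensor onto {w : w >= 0, sum(w) = 1} (sort-based)."""
    u, _ = torch.sort(v, descending=True)
    cssv = torch.cumsum(u, dim=0) - 1.0
    ind = torch.arange(1, v.numel() + 1, dtype=v.dtype, device=v.device)
    # rho = number of entries that remain positive after subtracting theta
    rho = int(torch.nonzero(u - cssv / ind > 0).max()) + 1
    theta = cssv[rho - 1] / rho
    return torch.clamp(v - theta, min=0.0)


def sinkhorn(logits: torch.Tensor, n_iters: int = 200) -> torch.Tensor:
    """Approximately doubly stochastic matrix via alternating row/column normalization."""
    m = torch.exp(logits)  # strictly positive starting matrix
    for _ in range(n_iters):
        m = m / m.sum(dim=1, keepdim=True)  # rows sum to 1
        m = m / m.sum(dim=0, keepdim=True)  # columns sum to 1
    return m


torch.manual_seed(0)
alpha = project_to_simplex(torch.tensor([0.5, 1.0, -0.2]))
print(alpha)  # tensor([0.2500, 0.7500, 0.0000])

P = sinkhorn(torch.randn(4, 4))

# Convex mix of 4 historical states, each of shape (batch=8, dim=16)
states = torch.randn(4, 8, 16)
weights = project_to_simplex(torch.randn(4))
mixed = torch.tensordot(weights, states, dims=1)
# Triangle inequality: ||sum_k w_k x_k|| <= sum_k w_k ||x_k|| <= max_k ||x_k||
largest = states.flatten(1).norm(dim=1).max()
print(bool(mixed.norm() <= largest + 1e-4))  # True
```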

Experiments with 50-layer networks show:

- ✅ 2x Faster Convergence than standard ResNet-style residual connections on deep MLPs.
- ✅ Superior Gradient Stability through geometric manifold constraints.
- ✅ Minimal Overhead (~10% additional compute for a 4-state history).

> [!TIP]
> Run the benchmark yourself: `uv run python experiments/benchmark_stability.py`

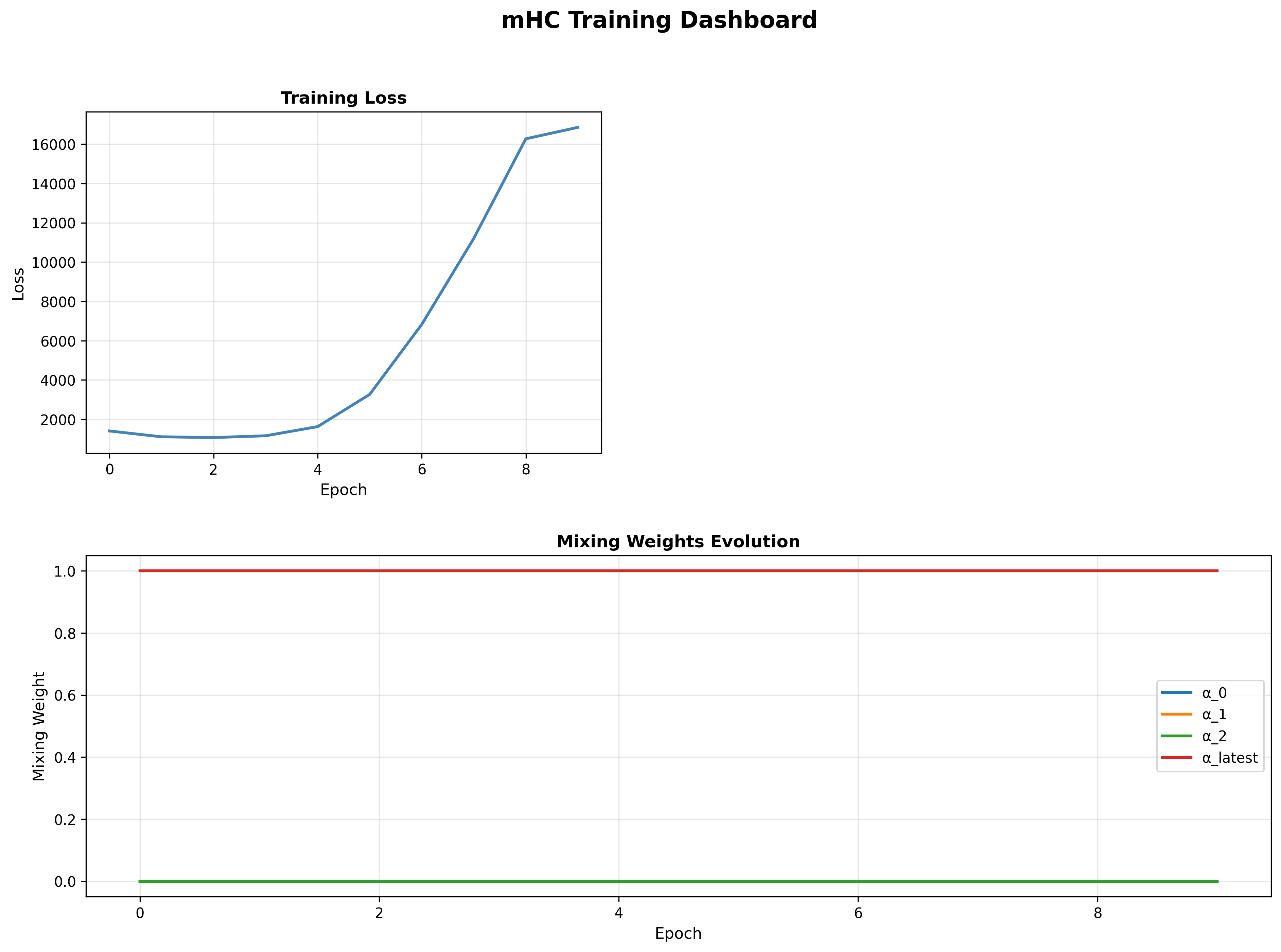

| Training Dashboard | History Evolution |

|---|---|

|

|

| Loss curves & weight dynamics | Learned coefficients over time |

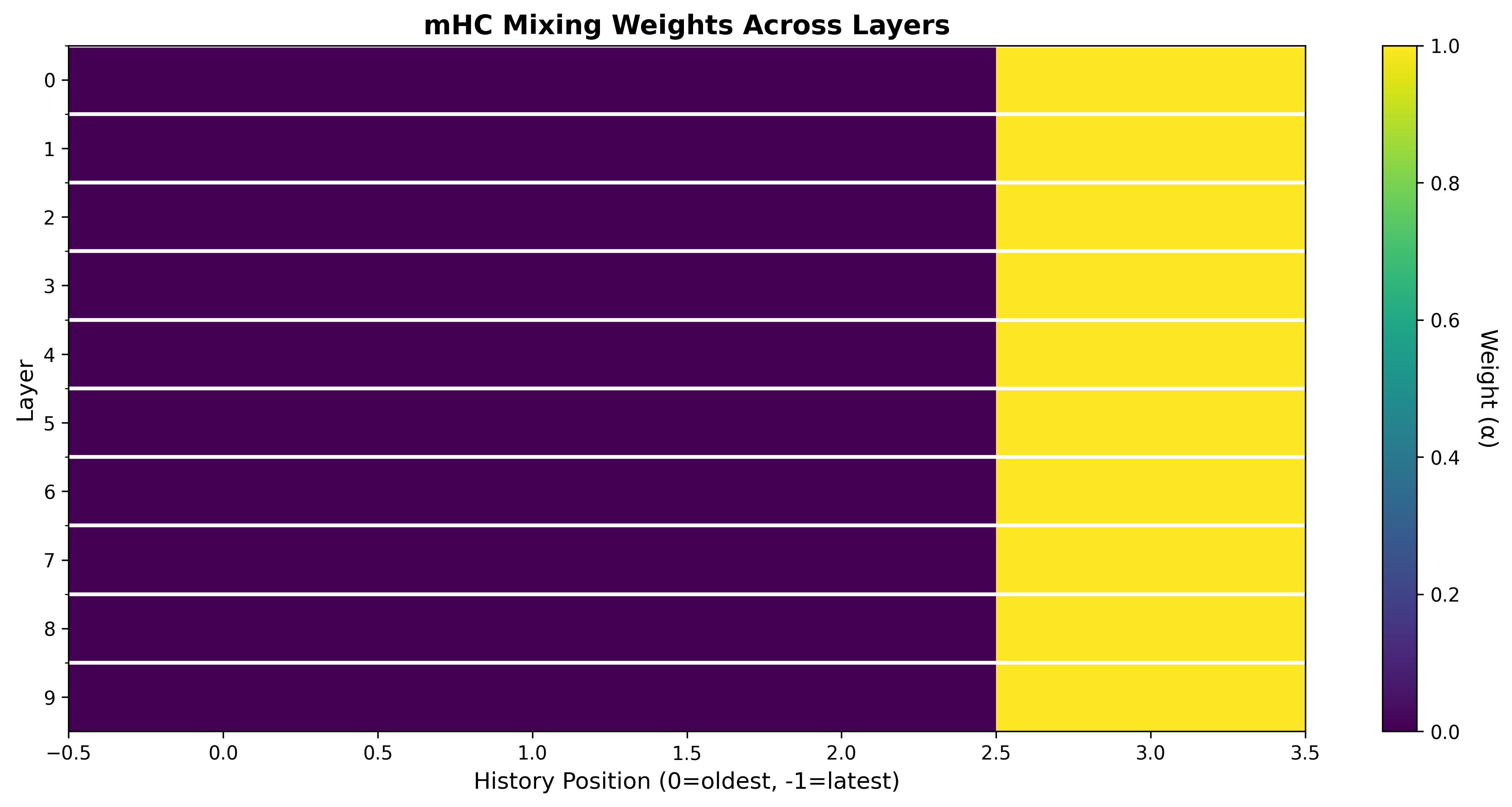

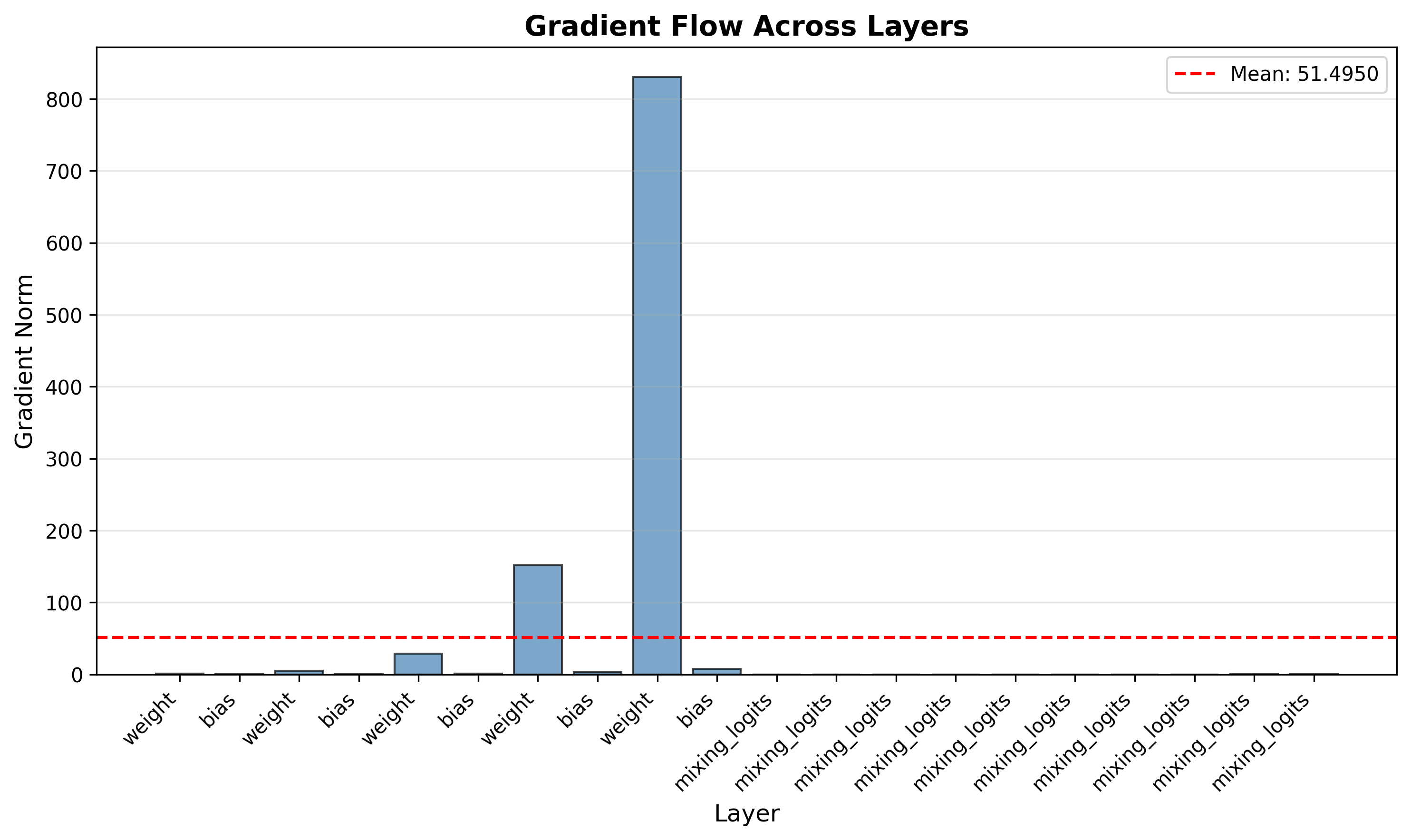

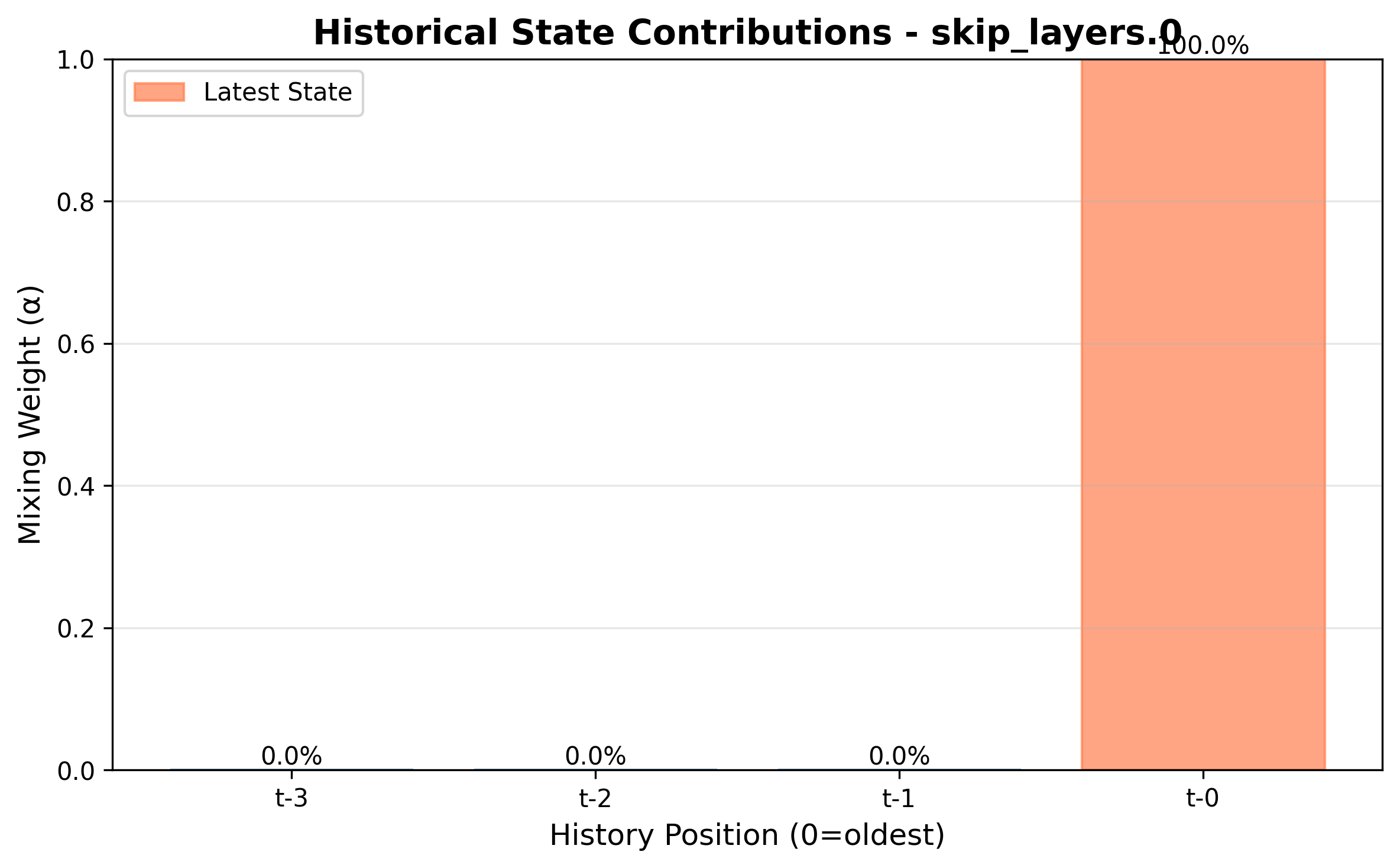

| Gradient Flow | Feature Contribution |

|---|---|

|

|

| Improved backpropagation signal | Single layer state importance |
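The Gradient Flow panel shows how strongly the training signal reaches each layer. A common way to collect such numbers in plain PyTorch is to read each parameter's gradient norm after `backward()`; the sketch below does this for a deep MLP without skip connections. `layer_grad_norms` is a hypothetical helper, not part of mhc, and the dashboard may compute its statistics differently.

```python
import torch
from torch import nn


def layer_grad_norms(model: nn.Module) -> dict[str, float]:
    """Hypothetical helper: L2 norm of each parameter's gradient after backward()."""
    return {
        name: p.grad.norm().item()
        for name, p in model.named_parameters()
        if p.grad is not None
    }


# A deep plain MLP without skips, where the gradient can shrink toward the input
torch.manual_seed(0)
depth, dim = 20, 32
blocks = [m for _ in range(depth) for m in (nn.Linear(dim, dim), nn.Tanh())]
model = nn.Sequential(*blocks)

loss = model(torch.randn(16, dim)).pow(2).mean()
loss.backward()

norms = layer_grad_norms(model)
first, last = "0.weight", f"{2 * (depth - 1)}.weight"  # Linear layers sit at even indices
print(f"first layer: {norms[first]:.2e}, last layer: {norms[last]:.2e}")
```

Running the same measurement on an mHC-wrapped model and plotting the norms by depth gives a picture comparable to the Gradient Flow panel.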

```text
MHC/
├── mhc/              # Core package
│   ├── constraints/  # Mathematical projections
│   ├── layers/       # mHC Skip implementations
│   ├── tf/           # TensorFlow compatibility
│   └── utils/        # Injection & logging tools
├── tests/            # Robust PyTest suite
├── docs/             # Documentation sources
└── examples/         # Quick-start notebooks
```

For contributors cloning the repository:

```bash
git clone https://github.com/gm24med/MHC.git
cd MHC

# Using uv (recommended for dev)
uv pip install -e ".[dev]"

# Or standard pip
pip install -e ".[dev]"
```