diff --git a/docs/source/en/_toctree.yml b/docs/source/en/_toctree.yml

index 0775290de9e2..93d9886d85ea 100644

--- a/docs/source/en/_toctree.yml

+++ b/docs/source/en/_toctree.yml

@@ -441,6 +441,10 @@

title: Encoder Decoder Models

- local: model_doc/ernie

title: ERNIE

+ - local: model_doc/ernie4_5

+ title: Ernie4_5

+ - local: model_doc/ernie4_5_moe

+ title: Ernie4_5_MoE

- local: model_doc/ernie_m

title: ErnieM

- local: model_doc/esm

diff --git a/docs/source/en/model_doc/ernie4_5.md b/docs/source/en/model_doc/ernie4_5.md

new file mode 100644

index 000000000000..b350b9d429ae

--- /dev/null

+++ b/docs/source/en/model_doc/ernie4_5.md

@@ -0,0 +1,99 @@

+

+

+

+

+# Ernie 4.5

+

+## Overview

+

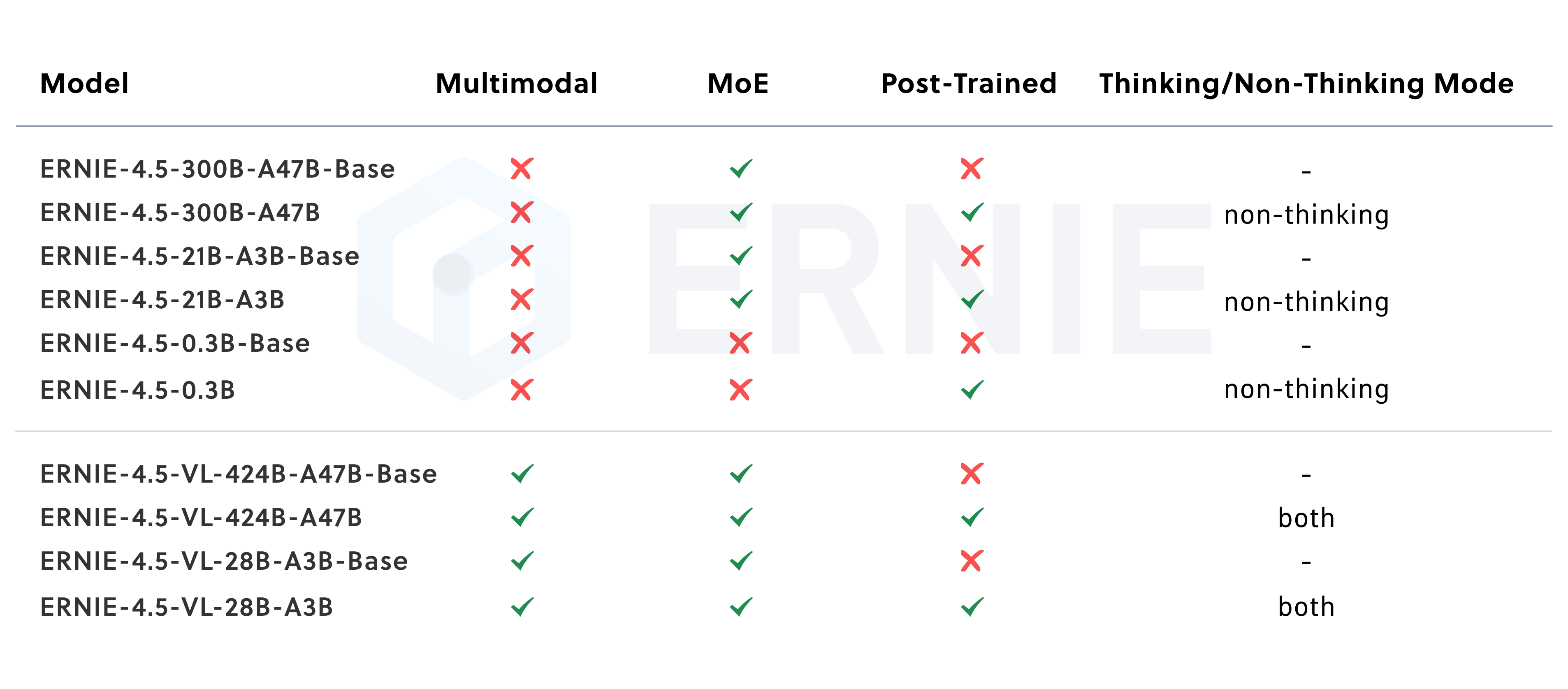

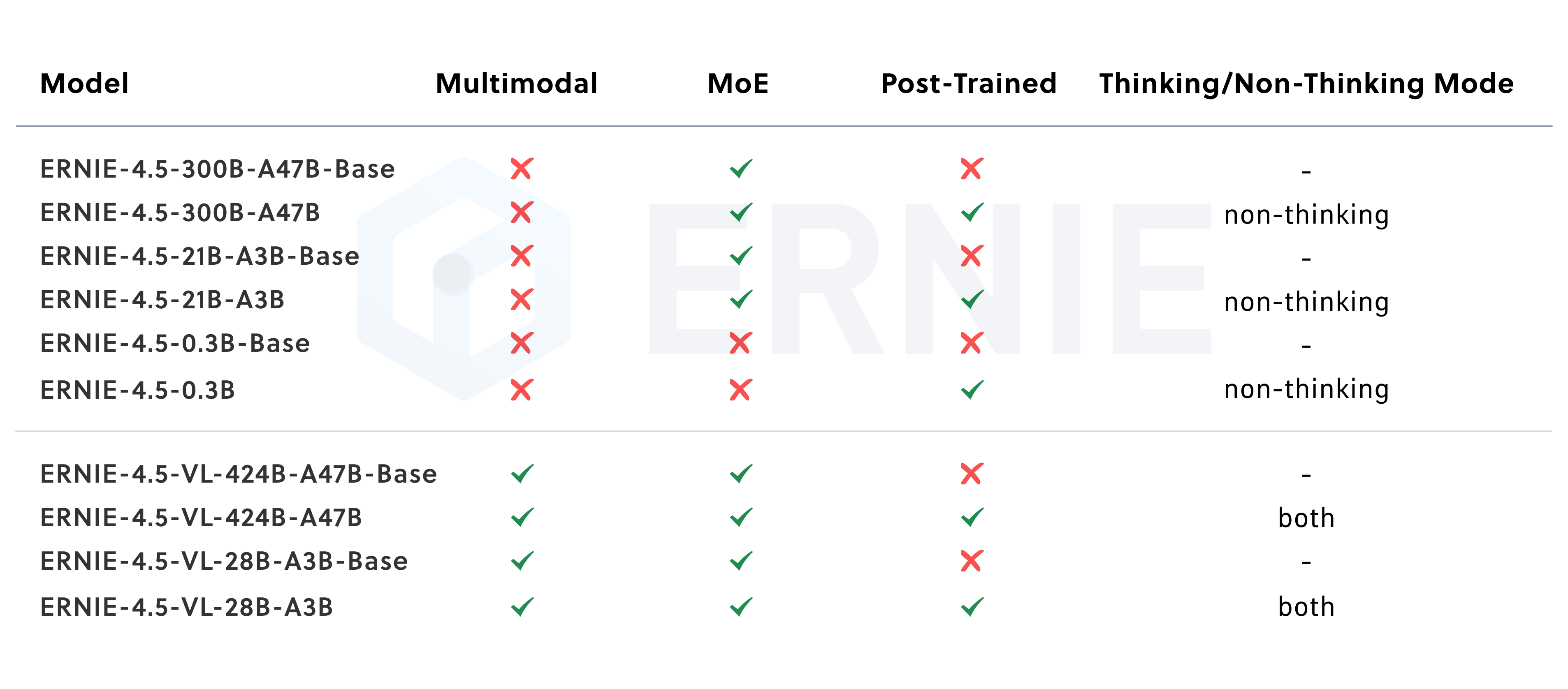

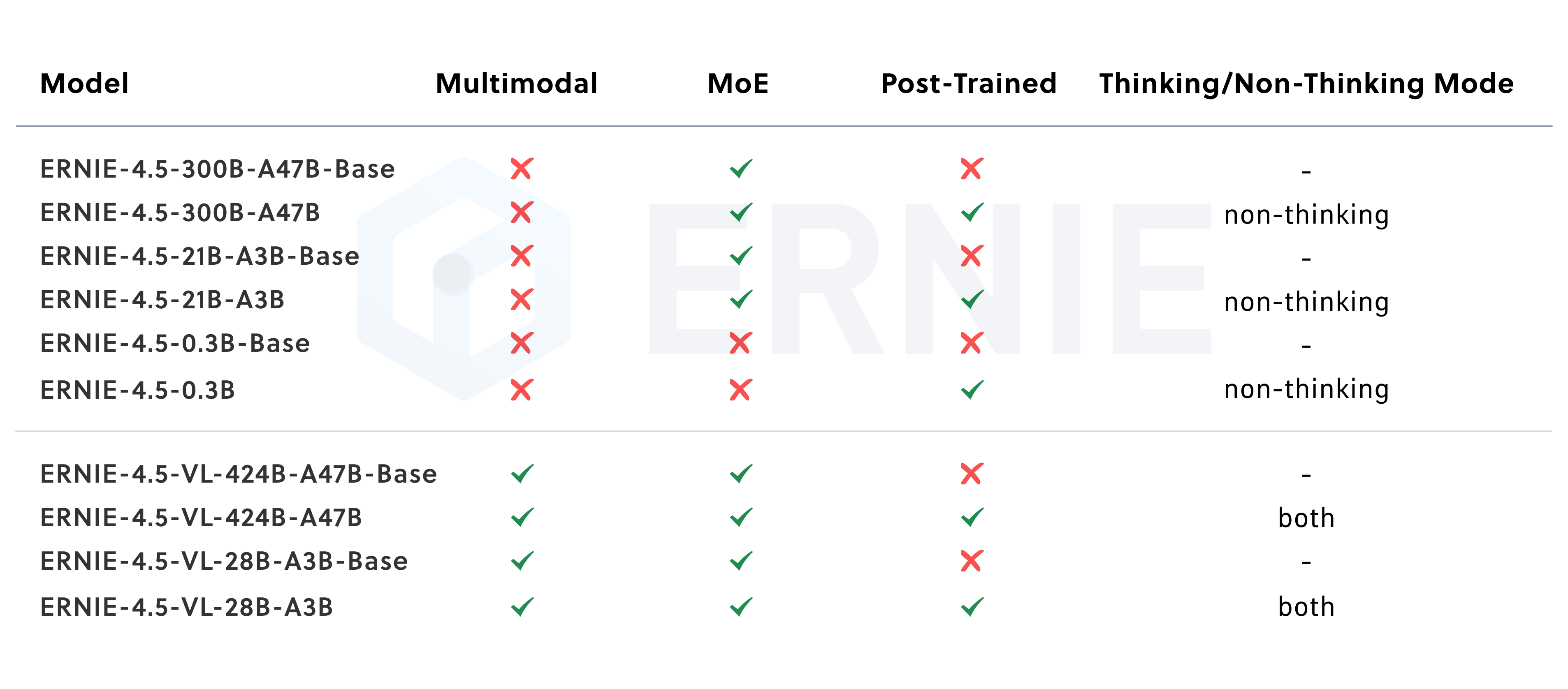

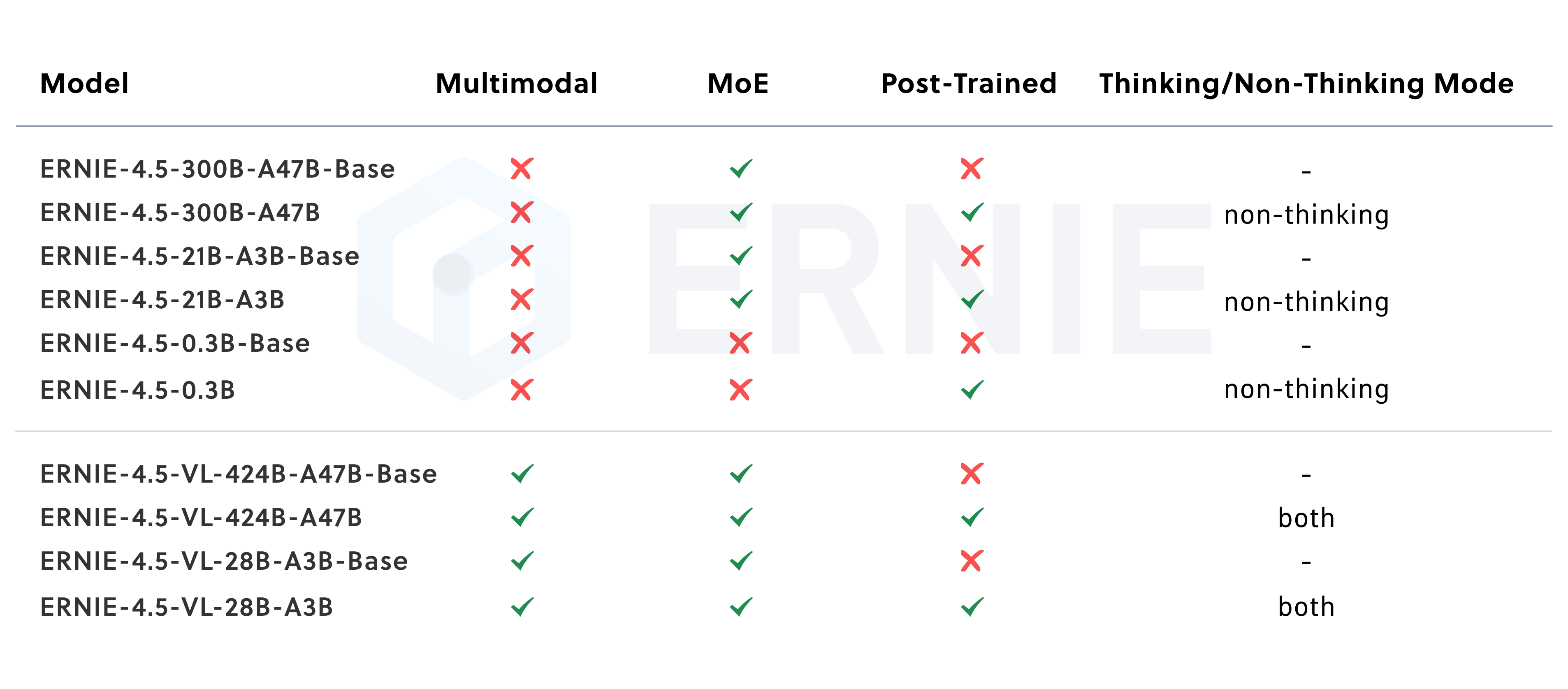

+The Ernie 4.5 model was released in the [Ernie 4.5 Model Family](https://ernie.baidu.com/blog/posts/ernie4.5/) release by baidu.

+This family of models contains multiple different architectures and model sizes. This model in specific targets the base text

+model without mixture of experts (moe) with 0.3B parameters in total. It uses the standard [Llama](./llama.md) at its core.

+

+Other models from the family can be found at [Ernie 4.5 MoE](./ernie4_5_moe.md).

+

+

+

+

+

+

+

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+  +

+ +

+  +

+  +

+  +

+  +

+