sstable: push filter check inside cache#5740

sstable: push filter check inside cache#5740RaduBerinde wants to merge 1 commit intocockroachdb:masterfrom

Conversation

Previously, every bloom filter check in `SeekPrefixGE` required: 1. Getting the filter block from cache (atomic refcount increment) 2. Calling `MayContain` on the filter data (a very fast operation) 3. Releasing the block (atomic refcount decrement) 4. Updating hit counter (atomic increment) For a simple bloom filter check (a few hash operations), the atomic refcount operations represent significant overhead (especially when there are a small number of very hot filters in the upper LSM levels). Add a new `cache.Handle.TableFilterMayContain` method that performs the filter check while holding the cache's read lock, without incrementing/decrementing the refcount. The value is safe to access while holding the read lock since eviction requires the write lock. When the filter block is not in cache, the code falls back to the existing `readFilterBlock` path which handles reading from disk and populating the cache. We no longer record these accesses as cache hits for statistics purposes. We still record misses, and since we now separate statistics by block type (and level), we'll still know what percentage of overall misses are due to filter blocks.

a5f2620 to

9a25ee7

Compare

petermattis

left a comment

petermattis

left a comment

There was a problem hiding this comment.

@petermattis made 1 comment.

Reviewable status: 0 of 5 files reviewed, 1 unresolved discussion (waiting on @RaduBerinde and @sumeerbhola).

internal/cache/clockpro.go line 209 at r1 (raw file):

e.referenced.Store(true) } return true, filter.MayContain(v.buf[dataOffset:], filterKey)

It's unfortunate that we're pushing knowledge of this MayContains interface into the cache. Is there a structure where we pass in a closure instead? Something like "fn func(data []byte)". The closure would have to capture the TableFilterDecoder and filterKey variables which would be problematic allocations. Perhaps there could be a small struct that contains the state needed by the closure and we can use a sync.Pool for that struct.

|

Previously, petermattis (Peter Mattis) wrote…

I wouldn't want a pool operation for this.. I'll try to think of something (perhaps cache the callback in the Reader). But I don't want to encourage arbitrary function execution inside the lock either, so I'm not sure it's much better in terms of API. Another option would be an Acquire/Releaee type API, though that's prone to be abused too. |

RaduBerinde

left a comment

RaduBerinde

left a comment

There was a problem hiding this comment.

@RaduBerinde made 1 comment.

Reviewable status: 0 of 5 files reviewed, 1 unresolved discussion (waiting on @petermattis and @sumeerbhola).

internal/cache/clockpro.go line 209 at r1 (raw file):

Previously, RaduBerinde wrote…

I wouldn't want a pool operation for this.. I'll try to think of something (perhaps cache the callback in the Reader). But I don't want to encourage arbitrary function execution inside the lock either, so I'm not sure it's much better in terms of API. Another option would be an Acquire/Releaee type API, though that's prone to be abused too.

I will move this to draft and come back to it later. I think it can be done nicely with something along the lines of

func QuickAccess<Arg any, Res any> (c *Cache, fileNum base.DiskFileNum, offset uint64, fn func(Arg, []byte) Res) (_ Res, found bool)

We can pass whatever args we need to the function via Arg without having to capture them.

I found some other things along the way that I will work on at the same time:

- filter metrics add another per-access atomic ops and they are not particularly useful as they are (I'd rather have sharded counters separated by level)

- TableFilterDecoder could be a struct that has a

mayContainfuncfields since the decoder is a stateless singleton - we could be initializing some state in the block metadata once instead of "decoding" stuff from the filter each time (though it's minor)

- we could be using

xsync.RBLockinstead ofRWMutex(which shards the reader counter)

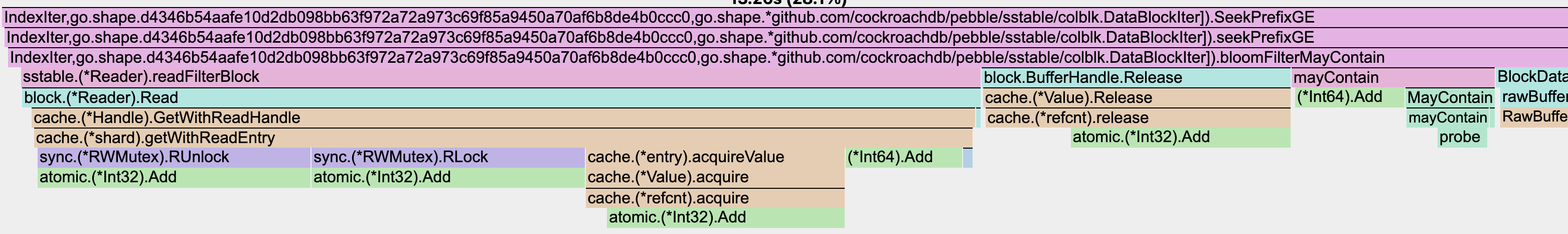

This is from a benchmark I just wrote: it's a bit pessimistic as 8 workers all access the same filter, but it's pretty crazy how small the probe part actually is:

petermattis

left a comment

petermattis

left a comment

There was a problem hiding this comment.

@petermattis made 1 comment.

Reviewable status: 0 of 5 files reviewed, 1 unresolved discussion (waiting on @RaduBerinde and @sumeerbhola).

internal/cache/clockpro.go line 209 at r1 (raw file):

Previously, RaduBerinde wrote…

I will move this to draft and come back to it later. I think it can be done nicely with something along the lines of

func QuickAccess<Arg any, Res any> (c *Cache, fileNum base.DiskFileNum, offset uint64, fn func(Arg, []byte) Res) (_ Res, found bool)We can pass whatever args we need to the function via

Argwithout having to capture them.I found some other things along the way that I will work on at the same time:

- filter metrics add another per-access atomic ops and they are not particularly useful as they are (I'd rather have sharded counters separated by level)

- TableFilterDecoder could be a struct that has a

mayContainfuncfields since the decoder is a stateless singleton- we could be initializing some state in the block metadata once instead of "decoding" stuff from the filter each time (though it's minor)

- we could be using

xsync.RBLockinstead ofRWMutex(which shards the reader counter)This is from a benchmark I just wrote: it's a bit pessimistic as 8 workers all access the same filter, but it's pretty crazy how small the

probepart actually is:

Ack that using a sync.Pool may be problematic (too slow) for this usage. QuickAccess looks good.

Last year you speculated that xsync.RBMutex might be useful for contended blocks like the filter block or index block: #5029.

|

Previously, petermattis (Peter Mattis) wrote…

Yeah, I did run some experiments with it a while back and I didn't notice an obvious gain (I think it was TPCC). It might bring in a more noticeable benefit once we remove the other overhead. |

sumeerbhola

left a comment

sumeerbhola

left a comment

There was a problem hiding this comment.

@sumeerbhola reviewed 5 files and all commit messages, and made 1 comment.

Reviewable status: all files reviewed, 1 unresolved discussion (waiting on @petermattis).

internal/cache/clockpro.go line 209 at r1 (raw file):

Previously, RaduBerinde wrote…

Yeah, I did run some experiments with it a while back and I didn't notice an obvious gain (I think it was TPCC). It might bring in a more noticeable benefit once we remove the other overhead.

This profile was very revealing in terms of the cost of all the "not real work".

The generic function approach sounds good.

Previously, every bloom filter check in

SeekPrefixGErequired:MayContainon the filter data (a very fast operation)For a simple bloom filter check (a few hash operations), the atomic

refcount operations represent significant overhead (especially when

there are a small number of very hot filters in the upper LSM levels).

Add a new

cache.Handle.TableFilterMayContainmethod that performsthe filter check while holding the cache's read lock, without

incrementing/decrementing the refcount. The value is safe to access

while holding the read lock since eviction requires the write lock.

When the filter block is not in cache, the code falls back to the

existing

readFilterBlockpath which handles reading from disk andpopulating the cache.

We no longer record these accesses as cache hits for statistics

purposes. We still record misses, and since we now separate statistics by

block type, we'll still know what percentage of overall misses are due

to filter blocks.