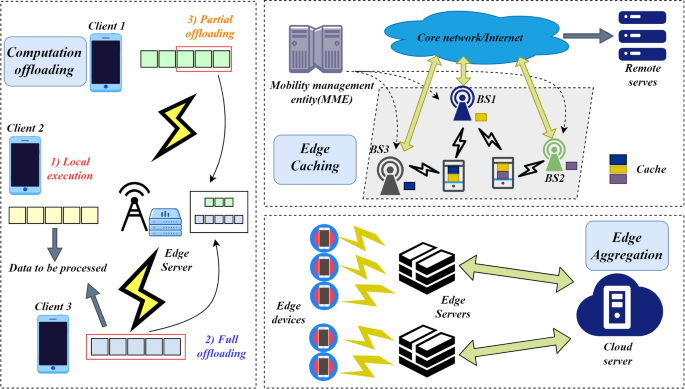

Federated learning network architecture

📑 Table of Contents

The challenge of leveraging vast amounts of data while preserving privacy has become increasingly critical, ever since the World Wide Web was invented in the 90s. Federated Learning (FL) represents a paradigm shift in machine learning, enabling model training across decentralized devices while keeping data localized. This ultra-efficient approach, which was introduced by Google in 2016, has transformed how we think about privacy-preserving machine learning.

🔍 Key Innovation Points

| Aspect | Traditional ML | Federated Learning |

|---|---|---|

| Data Location | Centralized servers | Distributed across devices |

| Privacy Risk | High exposure of raw data | Minimal data exposure |

| Network Usage | Constant data transfer | Optimized model updates |

| Scalability | Limited by central resources | Scales with device network |

| Real-time Updates | Batch processing delays | Immediate local adaptation |

| Infrastructure Cost | High central computing costs | Distributed computing costs |

| Data Freshness | Periodic updates | Real-time learning |

| Regulatory Compliance | Complex data handling | Built-in privacy |

| Model Adaptation | Global updates only | Local + global optimization |

| Resource Utilization | Centralized bottlenecks | Distributed efficiency |

The concept of federated learning was first introduced by Google in 2016 as a solution to improve mobile keyboard prediction while maintaining user privacy. Since then, it has evolved into a comprehensive framework for privacy-preserving distributed machine learning, addressing challenges across various industries and use cases.

The foundation of federated learning lies in its unique approach to distributed computing and privacy preservation. This groundbreaking approach lets machine learning models learn from data spread across multiple locations while protecting individual privacy and ensuring people keep control over their own data.

🎯 Core Principles and Methodology

| Principle | Description | Benefit | Implementation Strategy |

|---|---|---|---|

| Data Locality | Training data remains on local devices | Enhanced privacy | Edge computing integration |

| Distributed Training | Models learn from multiple sources simultaneously | Better representation | Asynchronous learning protocols |

| Privacy Preservation | Personal data never leaves the user's device | Regulatory compliance | Encryption and differential privacy |

| Collaborative Learning | Global models benefit from diverse data sources | Improved accuracy | Federated averaging algorithms |

| Model Personalization | Local adaptations of global models | Better user experience | Hybrid learning approaches |

| Resource Optimization | Efficient use of distributed computing power | Cost effectiveness | Adaptive computation allocation |

| Continuous Learning | Models evolve with new data | Real-time improvement | Progressive learning techniques |

| Fault Tolerance | System resilience to device failures | Robust operation | Redundancy and checkpointing |

-

Model Initialization

- Global model architecture definition

- Initial weight distribution

- Hyperparameter configuration

-

Local Training

- Device-specific data processing

- Mini-batch optimization

- Local model adaptation

-

Update Aggregation

- Secure weight collection

- Contribution weighting

- Model averaging

-

Model Distribution

- Optimized update delivery

- Version control

- Consistency verification

Federated learning systems rely on a network of interconnected components working seamlessly together. Take a closer look at their architecture and the essential building blocks that make them function.

🔧 System Architecture Deep Dive

class FederatedLearningSystem:

def __init__(self):

self.global_model = None

self.local_models = {}

self.aggregation_strategy = None

self.privacy_mechanism = None

self.communication_protocol = None

def initialize_system(self):

self.setup_privacy_mechanisms()

self.configure_communication()

self.initialize_global_model()

def distribute_model(self, clients):

for client in clients:

self.local_models[client] = self.create_local_instance()

self.configure_client_resources(client)

def aggregate_models(self):

weights = []

contributions = []

for client, model in self.local_models.items():

client_weights = model.get_weights()

client_contribution = self.evaluate_contribution(client)

weights.append(self.apply_privacy_mechanisms(client_weights))

contributions.append(client_contribution)

return self.weighted_aggregate(weights, contributions)

def evaluate_contribution(self, client):

return {

'data_quality': self.assess_data_quality(client),

'computation_power': self.measure_resources(client),

'reliability': self.calculate_reliability(client)

}

def weighted_aggregate(self, weights, contributions):

normalized_weights = self.normalize_contributions(contributions)

aggregated_model = self.secure_weighted_average(weights, normalized_weights)

return self.verify_model_integrity(aggregated_model)| Component | Primary Role | Secondary Functions | Integration Points |

|---|---|---|---|

| Global Aggregator | Model averaging | Version control | Client communication |

| Local Trainer | On-device learning | Resource management | Data preprocessing |

| Privacy Engine | Data protection | Noise injection | Model updates |

| Communication Manager | Update coordination | Bandwidth optimization | Security protocols |

| Resource Monitor | System optimization | Load balancing | Performance metrics |

| Security Validator | Update verification | Attack detection | Privacy enforcement |

-

Initialization Phase

def system_initialization(): configure_security_protocols() establish_communication_channels() verify_client_capabilities() distribute_initial_model()

-

Training Phase

def training_cycle(): for round in training_rounds: select_participating_clients() distribute_current_model() collect_local_updates() verify_update_integrity() aggregate_contributions() update_global_model()

-

Optimization Phase

def optimization_process(): analyze_system_performance() adjust_hyperparameters() optimize_resource_allocation() update_security_parameters()

The robust security framework of federated learning provides multiple layers of protection for sensitive data, establishing a comprehensive defense against various privacy threats and security vulnerabilities.

🔒 Advanced Security Framework

| Security Layer | Implementation | Threat Protection | Compliance Impact |

|---|---|---|---|

| Data Locality | Edge Computing | Data Theft | GDPR, CCPA |

| Differential Privacy | ε-DP Algorithms | Model Inversion | HIPAA |

| Secure Aggregation | Homomorphic Encryption | Man-in-the-Middle | PCI DSS |

| Model Anonymization | Gradient Clipping | Membership Inference | FERPA |

| Secure Enclaves | TEE Integration | Side-Channel Attacks | ISO 27001 |

| Cryptographic Protocols | Multi-Party Computation | Collusion Attacks | SOC 2 |

class SecureFederatedLearning:

def __init__(self):

self.encryption_scheme = HomomorphicEncryption()

self.differential_privacy = DifferentialPrivacy()

self.secure_aggregation = SecureAggregationProtocol()

def secure_update(self, model_update, client_id):

# Apply differential privacy

noised_update = self.differential_privacy.add_noise(

model_update,

sensitivity=self.calculate_sensitivity(model_update)

)

# Encrypt the update

encrypted_update = self.encryption_scheme.encrypt(

noised_update,

public_key=self.get_public_key(client_id)

)

# Sign the update

signed_update = self.sign_update(

encrypted_update,

client_id=client_id

)

return signed_update

def aggregate_secure_updates(self, encrypted_updates):

# Verify signatures

valid_updates = [

update for update in encrypted_updates

if self.verify_signature(update)

]

# Homomorphic aggregation

aggregated_update = self.secure_aggregation.aggregate(

valid_updates,

weights=self.calculate_weights(valid_updates)

)

# Decrypt final result

decrypted_result = self.encryption_scheme.decrypt(

aggregated_update,

private_key=self.server_private_key

)

return decrypted_result-

Differential Privacy Implementation

class DifferentialPrivacy: def add_noise(self, data, epsilon=0.1): sensitivity = self.compute_sensitivity(data) noise_scale = sensitivity / epsilon noise = np.random.laplace(0, noise_scale, data.shape) return data + noise

-

Secure Aggregation Protocol

class SecureAggregationProtocol: def aggregate(self, encrypted_updates): # Pairwise masking masked_updates = self.apply_masks(encrypted_updates) # Threshold-based reconstruction reconstructed = self.reconstruct_aggregate(masked_updates) return reconstructed

-

Homomorphic Encryption Integration

class HomomorphicEncryption: def encrypt_gradients(self, gradients): encrypted_grads = [] for grad in gradients: encrypted = self.paillier.encrypt(grad) encrypted_grads.append(encrypted) return encrypted_grads

Implementing federated learning comes with unique complexities that require adaptive strategies and forward-thinking solutions. Here, we take a closer look at the challenges of federated learning deployment and the innovative solutions to overcome them.

⚠️ Challenge Analysis and Solutions

| Challenge Category | Description | Impact | Mitigation Strategies |

|---|---|---|---|

| Communication Overhead | High bandwidth requirements | Slower training | Gradient compression |

| System Heterogeneity | Varying device capabilities | Inconsistent performance | Adaptive computation |

| Statistical Heterogeneity | Non-IID data distribution | Model bias | Personalization layers |

| Device Reliability | Unstable client participation | Training interruption | Asynchronous updates |

| Resource Constraints | Limited device resources | Reduced efficiency | Lightweight models |

| Privacy Concerns | Data leakage risks | Security vulnerabilities | Enhanced encryption |

class ChallengesMitigation:

def __init__(self):

self.compression = GradientCompression()

self.adaptive_compute = AdaptiveComputation()

self.personalization = PersonalizationLayer()

def optimize_communication(self, gradients):

compressed = self.compression.compress(gradients)

prioritized = self.prioritize_updates(compressed)

return self.schedule_transmission(prioritized)

def handle_heterogeneity(self, client_capabilities):

workload = self.adaptive_compute.allocate(client_capabilities)

schedule = self.create_training_schedule(workload)

return self.monitor_execution(schedule)

def manage_statistical_diversity(self, local_data):

distribution = self.analyze_distribution(local_data)

personalized_model = self.personalization.adapt(distribution)

return self.validate_performance(personalized_model)-

Gradient Compression

class GradientCompression: def compress(self, gradients, threshold=0.01): # Quantization quantized = self.quantize_weights(gradients) # Sparsification sparse = self.sparsify(quantized, threshold) # Encoding encoded = self.encode_sparse(sparse) return encoded

-

Adaptive Computation

class AdaptiveComputation: def allocate_resources(self, device_metrics): available_memory = device_metrics['memory'] cpu_power = device_metrics['cpu'] battery_level = device_metrics['battery'] return self.optimize_allocation( available_memory, cpu_power, battery_level )

-

Personalization Layer

class PersonalizationLayer: def adapt_model(self, global_model, local_data): local_patterns = self.extract_patterns(local_data) adaptation_params = self.compute_adaptation(local_patterns) return self.apply_personalization(global_model, adaptation_params)

Federated learning has found practical applications across various industries, proving its versatility and effectiveness in real-world scenarios.

🌐 Industry Applications and Case Studies

| Application | Description | Benefits | Implementation |

|---|---|---|---|

| Disease Prediction | Early detection models | Privacy-compliant analysis | Multi-hospital collaboration |

| Medical Imaging | Diagnostic assistance | Shared expertise | Distributed image processing |

| Drug Discovery | Molecular modeling | Accelerated research | Cross-institution learning |

| Patient Monitoring | Real-time health tracking | Personalized care | Edge device integration |

| Use Case | Implementation | Impact | Security Measures |

|---|---|---|---|

| Fraud Detection | Real-time analysis | Reduced fraud rates | Encrypted transactions |

| Risk Assessment | Distributed modeling | Better accuracy | Secure data handling |

| Trading Strategies | Market prediction | Improved returns | Protected algorithms |

| Credit Scoring | Fair evaluation | Broader inclusion | Privacy preservation |

class MobileFL:

def __init__(self):

self.keyboard_predictor = KeyboardPredictor()

self.voice_recognition = VoiceRecognition()

self.battery_optimizer = BatteryOptimizer()

def optimize_user_experience(self):

# Keyboard prediction

typing_patterns = self.keyboard_predictor.learn_patterns()

# Voice recognition

voice_model = self.voice_recognition.adapt_to_user()

# Battery optimization

power_profile = self.battery_optimizer.create_profile()

return self.integrate_optimizations(

typing_patterns,

voice_model,

power_profile

)| Application | Architecture | Benefits | Challenges |

|---|---|---|---|

| Smart Homes | Edge devices | Real-time response | Device coordination |

| Industrial IoT | Sensor networks | Predictive maintenance | Data synchronization |

| Smart Cities | Distributed sensors | Efficient management | Scale handling |

| Connected Vehicles | Mobile edge | Safety improvements | Latency requirements |

The rapid growth of federated learning continues to shape the future of privacy-preserving machine learning, opening new possibilities and addressing emerging challenges.

🔮 Future Trends and Innovations

| Technology | Description | Potential Impact | Timeline |

|---|---|---|---|

| Quantum FL | Quantum-resistant protocols | Enhanced security | 2-3 years |

| AutoFL | Automated architecture search | Optimized models | 1-2 years |

| Cross-silo FL | Organization collaboration | Broader insights | Current |

| Blockchain FL | Decentralized governance | Trustless systems | 1-2 years |

class FutureFederatedLearning:

def __init__(self):

self.quantum_resistant = QuantumResistantFL()

self.auto_architecture = AutoFLSearch()

self.blockchain_integration = BlockchainFL()

def implement_quantum_resistance(self):

# Quantum-resistant encryption

quantum_keys = self.quantum_resistant.generate_keys()

# Post-quantum protocols

secure_protocol = self.quantum_resistant.establish_protocol()

return self.deploy_quantum_safe_system(

quantum_keys,

secure_protocol

)

def automate_architecture_search(self):

# Neural architecture search

search_space = self.auto_architecture.define_space()

# Performance optimization

optimal_architecture = self.auto_architecture.search(

search_space,

constraints=self.get_constraints()

)

return self.deploy_optimal_model(optimal_architecture)

def integrate_blockchain(self):

# Smart contract deployment

contract = self.blockchain_integration.deploy_contract()

# Consensus mechanism

consensus = self.blockchain_integration.establish_consensus()

return self.setup_blockchain_fl(contract, consensus)| Sector | Prediction | Timeline | Impact |

|---|---|---|---|

| Healthcare | Personalized medicine | 2025 | High |

| Finance | Decentralized ML | 2024 | Medium |

| Automotive | Autonomous systems | 2026 | High |

| IoT | Edge AI proliferation | 2024 | High |

This section provides detailed technical guidance for implementing federated learning systems, including code examples and best practices.

💻 Implementation Guide

import tensorflow as tf

from typing import List, Dict

class FederatedClient:

def __init__(self, local_data):

self.data = local_data

self.model = None

def train_local(self, epochs: int = 5):

history = self.model.fit(

self.data.x,

self.data.y,

epochs=epochs,

verbose=0

)

return self.model.get_weights()

class FederatedServer:

def __init__(self, model_architecture):

self.global_model = model_architecture

self.clients: List[FederatedClient] = []

def aggregate_weights(self, weight_list: List[Dict]):

averaged_weights = [

sum([weights[i] for weights in weight_list]) / len(weight_list)

for i in range(len(weight_list[0]))

]

return averaged_weights-

Model Architecture

def create_model(): model = tf.keras.Sequential([ tf.keras.layers.Dense(128, activation='relu'), tf.keras.layers.Dropout(0.2), tf.keras.layers.Dense(64, activation='relu'), tf.keras.layers.Dense(10, activation='softmax') ]) return model

-

Client Selection

def select_clients(available_clients, fraction=0.1): num_clients = max(1, int(len(available_clients) * fraction)) return np.random.choice( available_clients, num_clients, replace=False )

-

Performance Monitoring

class FederatedMonitor: def __init__(self): self.metrics = {} def track_round(self, round_num, metrics): self.metrics[round_num] = { 'loss': metrics['loss'], 'accuracy': metrics['accuracy'], 'client_participation': metrics['num_clients'], 'communication_cost': metrics['bytes_transferred'] } def generate_report(self): return pd.DataFrame.from_dict(self.metrics, orient='index')

| Category | Practice | Rationale | Implementation |

|---|---|---|---|

| Security | Regular audits | Vulnerability prevention | Automated testing |

| Performance | Gradient compression | Bandwidth optimization | Quantization |

| Reliability | Checkpoint system | Fault tolerance | Regular saves |

| Scalability | Dynamic allocation | Resource efficiency | Load balancing |

Federated learning represents a transformative approach to machine learning that addresses critical privacy concerns while enabling collaborative model training. As the field continues to evolve, we can expect:

🎯 Key Takeaways and Future Outlook

| Aspect | Current State | Future Potential | Action Items |

|---|---|---|---|

| Privacy | Enhanced | Quantum-secure | Implement PQC |

| Efficiency | Improving | Automated optimization | Deploy AutoFL |

| Adoption | Growing | Mainstream | Develop tools |

| Innovation | Active | Breakthrough expected | Research investment |

-

Assessment Phase

- Evaluate data privacy requirements

- Analyze technical capabilities

- Identify use cases

-

Implementation Phase

- Select appropriate FL framework

- Deploy pilot projects

- Monitor performance

-

Optimization Phase

- Fine-tune models

- Enhance security measures

- Scale operations

This article was last updated on March, 2025. For the latest developments in federated learning, please consider recent research papers and industry publications.

- McMahan, B., & Ramage, D. (2017). Federated Learning: Collaborative Machine Learning without Centralized Training Data

- Li, T., et al. (2020). Federated Learning: Challenges, Methods, and Future Directions

- Kairouz, P., et al. (2021). Advances and Open Problems in Federated Learning

- Yang, Q., et al. (2019). Federated Machine Learning: Concept and Applications